…and it always will be!

Seriously, first, let’s be clear: my opinions do not matter! X-Plane is a small program in a large market (game/graphics hardware) and as I’ve said before, flight simulators are not the early adopters of new tech. So (and this is a huge relief to me) I can do my job without correctly predicting the future of computer graphics.

Keep that in mind as I mouth off regarding ray tracing – I’m just some guy throwing tomatoes from the balcony. X-Plane doesn’t have skin in the game, and if I prove to be totally wrong, we’ll write a ray tracer when the tech scales to be flight simulator ready, and you can point to this post and have a good laugh.

With that in mind, I don’t see ray tracing as being particularly interesting for games. I could make arguments that rasterization* is significantly more efficient, and will keep moving the bar each time ray tracing catches up. I could argue that “tricks” like environment mapping, shadow mapping, deferred rendering, and SSAO have continued to move effects that we would have thought to be ray-tracing-only into the rasterization space. (Heck, ray tracing doesn’t even do ambient occlusion particularly well unless you are willing to burn truly insane amounts of computing power.) I could argue that there is a network effect: GPU vendors make rasterization faster because games use it, and games use it because the GPU makers have made it fast. That’s a hard cycle to break with a totally different technology.

I don’t really have the stature in the world of computer graphics to say such things. Fortunately John Carmack does. Read what he has to say. I think he’s spot on in pointing out that rasterization has fundamental efficiencies over ray tracing, and ray tracing doesn’t offer enough real usefulness to overcome the efficiency gap and the established media pipeline.

The interview is from 2008; a few months ago Intel announced that first-generation Larrabee hardware wouldn’t be video cards at all. For all practical purposes from a game/flight simulator perspective, they basically never shipped. So as you read Carmack’s comments regarding Intel, you can have a good chuckle that Intel’s claims have proven hollow due to the lack of actual hardware to run on.

I will be happy to be proven wrong by ray tracing, or any other awesome new technology. But I am by disposition skeptical until I see it running “for real”, i.e. in a real game that competes with modern games written via rasterization. Recoding old games or showing tech demos doesn’t convince me, because you can recode an old game even if your throughput is 1/20th of rasterization, and you can hide a lot of sins in a tech demo.

Heck, while I’m putting my foot in my mouth, here’s another one: unlimited detail. Any time someone announces the death of the triangle, I become skeptical. And their claim of processing “unlimited point cloud data in real time” strikes me as an over-simplification. Perhaps they can create a smooth level of detail experience with excellent paging characteristics (which is great!) but the detail isn’t unlimited. The data is limited by your input data source, your production system, the limits of your hardware, etc. Those are the same limits that a mesh LOD system has now. In other words, what they are doing may be significantly more efficient, but they haven’t made the impossible possible.

That is my general complaint with most of the “anti-rasterization” claims – they assume that mesh/rasterization systems are coded by stupid people – and yet most of the interesting algorithms for rasterization, like shadow mapping and SSAO, are quite clever. Consider these images: saying that rasterization doesn’t produce nice images while showing Half-Life 2 (2004, for the X-Box 360) is like saying that cars are not fuel efficient because a 1963 Cadillac got 8 mpg. The unlimited detail sample images show a lot of repeated geometry, something that renderers today already do very well, if that’s what was desirable (which it isn’t).

Finally, is in favor of sparse voxel octrees (SVOs). SVOs strike me as the most probable of the various non-mesh-rasterization ideas floating around, and an idea that might be useful for flight simulators in some cases. To me what makes SVOs practical (and in defense of the unlimited detail folks, their algorithm potentially does this too) is that it can be mix-and-matched with existing rasterization technology, so that you only pay for the new tech where it does you some good.

* Rasterization is the process of drawing on the screen by filling in the pixels covered by a triangle with some shading.
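To make that footnote concrete, here is a minimal sketch of the "fill in the covered pixels" step in C, using the common edge-function (half-space) test to decide whether each pixel center lies inside a triangle. The function names and the tiny one-byte-per-pixel framebuffer are illustrative assumptions; real GPUs do this in hardware with far more care (fill rules, clipping, perspective-correct shading).

```c
#include <stddef.h>

/* Cross product of (b - a) and (p - a): positive when p is to the left of
   edge a->b, i.e. inside for a counter-clockwise triangle (y pointing up). */
static float edge_fn(float ax, float ay, float bx, float by, float px, float py)
{
    return (bx - ax) * (py - ay) - (by - ay) * (px - ax);
}

/* Fill every pixel whose center lies inside the triangle with 'shade'.
   Vertices are in pixel units and assumed counter-clockwise.
   Returns the number of pixels covered. */
int raster_triangle(unsigned char *fb, int w, int h,
                    float x0, float y0, float x1, float y1,
                    float x2, float y2, unsigned char shade)
{
    int covered = 0;
    for (int y = 0; y < h; ++y) {
        for (int x = 0; x < w; ++x) {
            float px = x + 0.5f, py = y + 0.5f;   /* sample at pixel center */
            float e0 = edge_fn(x0, y0, x1, y1, px, py);
            float e1 = edge_fn(x1, y1, x2, y2, px, py);
            float e2 = edge_fn(x2, y2, x0, y0, px, py);
            if (e0 >= 0 && e1 >= 0 && e2 >= 0) { /* inside all three edges */
                fb[(size_t)y * w + x] = shade;
                ++covered;
            }
        }
    }
    return covered;
}
```

This brute-force version visits every pixel on the screen; practical rasterizers only walk the triangle's bounding box, which is part of why the per-triangle cost stays so low.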

I’ll take a break from iPad drivel for a few posts; at least one or two of you don’t already own one. (Seriously, it’s simply easier to blog about X-Plane for iPad because it is already released; a lot of cool things for the desktop are still in development.)

In response to my comments on water reflections in X-Plane 950, some users brought up ray tracing.

My immediate thought is: I will start to think more seriously about ray tracing once it becomes the main technology behind first person shooters (FPS).

Improvements in rendering technology come to FPS before flight simulators (and this is true for the combat sims and MSFS series too, not just us). Global shadows, deferred rendering, screen-space ambient occlusion…the cool new tricks get tried out on FPS; by the time they make it into a flight simulator the technology has moved from “clever idea” to “standard issue.”

Consider that X-Plane now finally has per-pixel lighting. Why didn’t we have it when the FPS first did? Well, one reason is that the FPS were cheating. If you look at the papers suggesting how to program per-pixel lighting, at the time there were all sorts of clever techniques involving baking specular reflections into cube maps and other such work-arounds to improve performance. These were necessary because titles at the time were doing per-pixel lighting on hardware that could barely handle it. X-Plane’s approach (like that of other modern games) is to simply program per-pixel lighting and trust that your GeForce 8800 or Radeon 4870 has plenty of shader power.

I believe that the gap between FPS and flight simulators comes from two sources:

- Viewpoint. You can put the camera quite literally anywhere in a flight simulator, and thus the world needs to look good from virtually any position. By comparison, if your game involves a six foot player walking on the ground (and sometimes jumping 10 feet in the air) you know a lot about what the user will never see, and you can pull a lot of tricks to reduce the performance cost of your world based on this knowledge. (This kind of optimization applies to racing games too.)

To give one simple example of the kind of optimization a shooter can make that a flight simulator cannot, consider “portal culling”. A portal-culled world is one where the visibility between distinct regions has been precomputed. A trivial example is a house. Each room is only visible through the doors of the other rooms.* Thus when you are walking through a room, virtually no other room is being drawn at all. The visible world is only 20 by 20 meters. Thus the developers know that they have the entire hardware “budget” of computing power to dedicate to that one room and can load it up with effects, even expensive ones.

(A further advantage of portal culling is a balance of effects. Because rooms are not drawn together in arbitrary combinations, the developers may find ways to cheat on the lighting or shadowing effects, and they know nothing will “clutter” the world and ruin the cheats.)**

- Often the FPS will have pre-built content, rather than user-configurable content. Schemes like portal culling (above) only work when you know everything about the world ahead of time and can calculate what is visible where. The same goes for many careful cheats on visual effects.
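Here is a toy sketch of the "precomputed visibility" idea behind portal culling, assuming a hypothetical five-room house whose potentially visible set (PVS) per room was computed offline and stored as a bitmask. At run time, deciding what to draw is just a table lookup, and that table is exactly the kind of knowledge a flight simulator with user-supplied scenery cannot build ahead of time.

```c
#include <stdint.h>

enum { KITCHEN, HALL, LIVING, BEDROOM, BATH, ROOM_COUNT };

/* Hypothetical data, precomputed offline: bit r is set if room r can be
   seen from this room through some chain of doorways. */
static const uint32_t pvs[ROOM_COUNT] = {
    [KITCHEN] = (1u << KITCHEN) | (1u << HALL),
    [HALL]    = (1u << HALL) | (1u << KITCHEN) | (1u << LIVING) | (1u << BEDROOM),
    [LIVING]  = (1u << LIVING) | (1u << HALL),
    [BEDROOM] = (1u << BEDROOM) | (1u << HALL) | (1u << BATH),
    [BATH]    = (1u << BATH) | (1u << BEDROOM),
};

/* Fill 'out' with the rooms to draw for a camera in 'camera_room' and
   return how many were selected. No per-frame visibility math is done;
   every room not in the table is simply skipped. */
int rooms_to_draw(int camera_room, int out[ROOM_COUNT])
{
    int n = 0;
    for (int r = 0; r < ROOM_COUNT; ++r)
        if (pvs[camera_room] & (1u << r))
            out[n++] = r;
    return n;
}
```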

But a flight simulator is more like a platform: users add content from lots of different sources, and the flight simulator rendering engine has to be able to render an effect correctly no matter what the input. This means the scope of cheating is a lot smaller.

Consider for example water reflections. In a title with pre-made content, the artists can go into the world in advance and mark items as “reflects”, “doesn’t reflect”, reducing the amount of drawing necessary for water reflections. The artist simply has to look around the world and say “ah – this mountain is nowhere near a lake – no one will notice it.”

X-Plane can’t make this optimization. We have no idea where there will be water, or airports, or where you might be flying, or where there might be another multiplayer plane. We know nothing. Everything is subject to change with custom scenery. So we can’t cheat – we have to do a lot of work for reflections, some of which might be wasted. (But it would be too expensive in CPU power to figure out what is wasted while flying.)

Putting it all together, my commentary on ray-tracing is this: the FPS will be able to integrate small amounts of ray tracing first, because they will be in a position to deploy it tactically, using it only where it is really necessary, in hybrid ray-trace + rasterized engines. They’ll be able to exclude big parts of the scene from the ray tracing pass, improving performance. They’ll be able to “dumb down” the quality of the ray trace in ways that you can’t see, again improving performance. The result of all of this will be some ray tracing in FPS when the hardware is just barely ready.

For a flight simulator, it will take longer, because we’ll need hardware that can do a lot more ray tracing work. We won’t know as much about our world, which comes from third party content, so we won’t be able to eliminate visually unimportant ray traces. Like deferred rendering, shadow mapping, SSAO and a number of other effects, flight simulators will need more computing power to apply the effects to a world that can be modified by users.

(Is ray tracing even useful, compared to rasterization? I have no prediction. Personally I am not excited by it, but fortunately I don’t have to make a good guess as to whether it is the future of flight simulation. The FPS will be able to, by effective cheating, apply ray tracing way before us, and give us a sneak peek into what might be possible.)

* There never are very many windows in those first person shooters, are there?

** To be clear: there is nothing negative about the term “cheat” in computer graphics. Finding a way to cheat on the cost of an algorithm means the developers are very good at their jobs! “Cheating” on the cost of an algorithm means more efficient rendering. If the term cheating seems negative, substitute “lossy optimization”.

OS X 10.6.3 is out. Besides adding a bunch of OpenGL extensions*, it looks like vertex performance is improved on nVidia hardware. My quick tests compare 10.5.8 to 10.6.3 (since I no longer have a 10.6.2 partition) and show a 15-30% improvement. If you have 10.5 and an 8800 you may want to consider updating your OS.

I also discovered that --fps_test=3 produces unreliable results because…wait for it…the deer and birds are randomized. If they show up during the fps test, you get hit with a performance penalty. I am working to correct this and may have to recut the time demo to work around this behavior.

If you are trying to time the sim via --fps_test=3, I suggest running the test multiple times – you should see “fast” runs and “slow” runs depending on our feathered and four-legged friends.
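If you script repeated runs, one simple way to summarize them is the median, which is much less sensitive than the mean to a couple of "slow" runs caused by wildlife. This is a generic C sketch, not part of X-Plane; collecting the per-run fps numbers is left to you.

```c
#include <stdlib.h>
#include <string.h>

/* qsort comparator for doubles (ascending). */
static int cmp_double(const void *a, const void *b)
{
    double x = *(const double *)a, y = *(const double *)b;
    return (x > y) - (x < y);
}

/* Median of n frame-rate samples (n >= 1). Sorts a copy so the caller's
   array keeps its original run order. Returns -1.0 on allocation failure. */
double median_fps(const double *runs, size_t n)
{
    double *tmp = malloc(n * sizeof *tmp);
    if (!tmp)
        return -1.0;
    memcpy(tmp, runs, n * sizeof *tmp);
    qsort(tmp, n, sizeof *tmp, cmp_double);
    double m = (n % 2) ? tmp[n / 2] : 0.5 * (tmp[n / 2 - 1] + tmp[n / 2]);
    free(tmp);
    return m;
}
```

Reporting the minimum and maximum next to the median also makes it obvious when a batch contained "slow" runs at all.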

Phoronix reported a performance penalty with the new update; I do not know the cause of this or whether the fps_test=3 bug could be causing it. But their test setup is very different than mine – a GeForce 9400 on a big screen, which really tests shading power. My setup (an 8800 on a small screen) tests vertex throughput, since that has been my main concern with NV drivers.

My suggestion is to use --fps_test=2 if you want to compare 10.6.2 vs. 10.6.3. I’ll try to run some additional benchmarks soon!

EDIT: Follow-up. I set the X-Plane 945 time demo to 2560 x 1024, 16x FSAA, and all shaders on (i.e. let’s use some fill rate). I put the Cirrus jet on runway 8 at LOWI, then set the view to forward, full screen, no HUD, and paused the sim. In this configuration, I see these results:

| Objects | 10.5.8 | 10.6.3 |
|---|---|---|
| none | 85 fps | 100 fps |
| a lot | 46 fps | 61 fps |
| tons | 37 fps | 42 fps |

Note that in the “no objects” case the sim is fill-rate bound – in the other two it is vertex bound. So it looks to me like 10.6.3 is faster than 10.5.8 for both CPU use/object throughput and perhaps fill rate (or at least, fill-rate heavy cases don’t appear to be worse).
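Because frame rate is a reciprocal measure, it can help to convert results like these to milliseconds per frame before comparing them. The two helpers below (names are illustrative) compute the percentage change in fps and the frame time; for the "none" row, 85 to 100 fps is roughly a 17.6% gain, or about 11.8 ms down to 10.0 ms per frame.

```c
/* Percentage change going from fps 'before' to fps 'after'. */
double fps_gain_percent(double before, double after)
{
    return 100.0 * (after - before) / before;
}

/* Milliseconds spent on each frame at a given frame rate. */
double frame_ms(double fps)
{
    return 1000.0 / fps;
}
```

Applied to the other rows, "a lot" goes from about 21.7 ms to 16.4 ms per frame, and "tons" from about 27.0 ms to 23.8 ms.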

* These extensions represent Apple and the graphics card company creating software interface to fully unlock the graphics card’s abilities.

This isn’t supposed to be a coding blog, but users do ask about DirectX vs. OpenGL, or sometimes start fights in the forums about which is better (and yes, my dad can beat up your dad!). In past posts I have tried to explain the relationship between OpenGL and DirectX and the effect of OpenGL versions on X-Plane.

At the Game Developers Conference 2010 OpenGL 4.0 was announced, and it looks to me like they released the OpenGL 3.3 specs at almost exactly the same time. So…is there anything interesting here?

A Quick Response

In understanding OpenGL 4.0, let’s keep in mind how OpenGL works: OpenGL gains new capabilities by extensions. This is like a new item appearing on a menu at your favorite restaurant. Today we have two new specials: pickles in cream sauce, and fried potatoes. Fortunately, you don’t have to order everything on the menu.

So what is OpenGL 4.0? It’s a collection of extensions: if an implementation has all of them it can call itself 4.0. An application might not care. If we only want 2 of the 4 extensions, we’re just going to look for those 2 extensions, not sweat what “version number” we have.
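In code, "looking for those 2 extensions" usually means scanning the space-separated extension list the driver reports (with a legacy context, the string returned by glGetString(GL_EXTENSIONS)). Below is a minimal C sketch of that check; the one detail that matters is matching whole names, because a plain strstr would report GL_ARB_instanced as present when only GL_ARB_instanced_arrays is. The extension names used in testing it are just examples.

```c
#include <string.h>

/* Returns 1 if 'name' appears as a complete, space-delimited token in
   'ext_list', 0 otherwise. */
int has_extension(const char *ext_list, const char *name)
{
    size_t len = strlen(name);
    if (!ext_list || len == 0)
        return 0;
    const char *p = ext_list;
    while ((p = strstr(p, name)) != NULL) {
        int starts = (p == ext_list) || (p[-1] == ' ');
        int ends = (p[len] == ' ') || (p[len] == '\0');
        if (starts && ends)
            return 1;
        p += len;   /* partial match (prefix of a longer name): keep looking */
    }
    return 0;
}
```

In an OpenGL 3.x core profile context, glGetString(GL_EXTENSIONS) is no longer allowed; there you query the count with glGetIntegerv(GL_NUM_EXTENSIONS, ...) and fetch each name with glGetStringi(GL_EXTENSIONS, i), comparing names one at a time.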

Now go back to OpenGL 3.0, and DirectX 10. When DX10 and the GeForce 8800 came out, nVidia published a series of OpenGL extensions that allowed OpenGL applications to use “cool DirectX 10 tricks”. The problem was: the extensions were all NVidia specific tricks. After a fairly long time, OpenGL’s architectural review board (ARB) picked up the specs, and eventually most of them made it into OpenGL 3.0 and 3.1. The process was very slow and very drawn out, with some of these “cool DirectX 10 tricks” only making it into “official” OpenGL now.

If there were OpenGL extensions for DirectX 10, who cares that the ARB was so slow to adopt these standards proposed by NVidia? Well, I do. If NVidia proposes an extension and then ATI proposes a different extension and the ARB doesn’t come up with a unified official extension, then applications like X-Plane have to have different code for different video cards. Our work-load doubles, and we can only put in half as many new cool features. Applications like X-Plane depend on unity among the vendors, via the ARB making “official” extensions.

So the most interesting thing about OpenGL 4.0 is how quickly they* made official ARB extensions for OpenGL that match DirectX 11’s capabilities. (NVidia hasn’t even managed to ship a DirectX 11 card yet, ATI’s HD5000 series has only been out for a few months, and OpenGL already has a spec.) OpenGL 4.0 exposes pretty much everything that is interesting in DirectX 11. By having official ARB extensions, developers like Laminar Research now know how we will take advantage of these new cards as we plan new features.

Things I Like

So are any of the new OpenGL 3.3 and 4.0 capabilities interesting? Well, there are three I like:

- Dual-source blending. It is way beyond this blog to explain what this is or why anyone would care, and it won’t show up as a new OBJ ATTRibute or anything. But this extension does make it possible to optimize some bottlenecks in the internal rendering engine.

- Instancing. Instancing is the ability to draw a mesh more than one time (with slight variants in each copy) with only one instruction to the graphics card. Since many games (like X-Plane) are limited in their ability to use the CPU to talk to the graphics card (we are “CPU bound” when rendering) the ability to ask for more work with fewer requests is a huge win.

There are a number of different ways to program “instancing” with OpenGL, but this particular extension is the one we prefer. It is not available on NVidia cards right now. So it’s nice to see it make it into the core spec – this is a signal that this particular draw path is considered important and will get attention.

- The biggest feature in OpenGL 4.0 (and DirectX 11) is tessellation. Tessellation is the ability for the graphics card to turn a crude mesh with a few triangles into a detailed mesh with lots of triangles. You can see ATI demoing this capability here.
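The simplest way to picture tessellation is midpoint subdivision: split each triangle into four by connecting its edge midpoints, then repeat. Real OpenGL 4.0 tessellation is programmable (control and evaluation shaders decide how finely to split and where the new vertices go, all on the GPU), so treat this CPU-side C sketch only as an illustration of how quickly a few triangles become many: 4^n triangles after n levels.

```c
#include <stdlib.h>

typedef struct { float x, y, z; } Vec3;
typedef struct { Vec3 a, b, c; } Tri;

static Vec3 midpoint(Vec3 p, Vec3 q)
{
    Vec3 m = { (p.x + q.x) * 0.5f, (p.y + q.y) * 0.5f, (p.z + q.z) * 0.5f };
    return m;
}

/* Subdivide 'count' triangles once, writing 4*count triangles to 'out'.
   All four children keep the parent's winding order. */
static void subdivide_once(const Tri *in, size_t count, Tri *out)
{
    for (size_t i = 0; i < count; ++i) {
        Vec3 ab = midpoint(in[i].a, in[i].b);
        Vec3 bc = midpoint(in[i].b, in[i].c);
        Vec3 ca = midpoint(in[i].c, in[i].a);
        out[4 * i + 0] = (Tri){ in[i].a, ab, ca };
        out[4 * i + 1] = (Tri){ ab, in[i].b, bc };
        out[4 * i + 2] = (Tri){ ca, bc, in[i].c };
        out[4 * i + 3] = (Tri){ ab, bc, ca };   /* center triangle */
    }
}

/* Apply 'levels' rounds of subdivision to one triangle. Returns a malloc'd
   array (caller frees) and stores its length in *out_count; NULL on failure. */
Tri *tessellate(Tri t, int levels, size_t *out_count)
{
    size_t count = 1;
    Tri *cur = malloc(sizeof *cur);
    if (!cur)
        return NULL;
    cur[0] = t;
    for (int l = 0; l < levels; ++l) {
        Tri *next = malloc(4 * count * sizeof *next);
        if (!next) {
            free(cur);
            return NULL;
        }
        subdivide_once(cur, count, next);
        free(cur);
        cur = next;
        count *= 4;
    }
    *out_count = count;
    return cur;
}
```

Flat midpoint subdivision adds triangles without adding shape; the interesting part of hardware tessellation is that the evaluation stage can displace the new vertices (for example from a height map), which is where the extra detail actually comes from.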

There are a lot of other extensions that make up OpenGL 3.3 and 4.0 but those are the big three for us.

* Who is “they” for OpenGL? Well, it’s the architectural review board (ARB) and the Khronos Group, but in practice these groups are made up of employees from NVidia, ATI, Apple, Intel, and other companies, so it’s really a collective of people involved in OpenGL use. There’s a lot of input from hardware vendors, but if you read the OpenGL extensions, you’ll sometimes see game development studios get involved; Transgaming and Blizzard show up every now and then.

I’ve been working on a conformance test for X-Plane. The idea is simple, and not at all mine: X-Plane 945 can output a series of test images that are the same on each run. The images cover a variety of rendering conditions. If a video driver is broken, the images will be corrupted.

You can learn more about how this works here: I am working on the 945 timedemo tarball now.

The main driver for this is to help NVidia, ATI, and Apple to integrate X-Plane into their dedicated testing. With X-Plane as part of their test systems, they can catch driver bugs the easy way – the day after the code is changed, rather than months later after a series of angry web posts. X-Plane 945 includes a number of new features as part of its framerate test to help with this process.
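A conformance check like this boils down to comparing each rendered image against a known-good reference. The C sketch below assumes both images are already loaded as 8-bit RGBA buffers of the same size; image loading, the per-channel tolerance, and the allowed number of bad pixels are assumptions for illustration, not a description of the actual X-Plane test harness. A small tolerance is there because legitimate driver changes can shift colors slightly without being bugs.

```c
#include <stddef.h>
#include <stdlib.h>

/* Count pixels whose R, G, B or A channel differs by more than 'tol'.
   Both buffers hold w*h RGBA pixels, 4 bytes each. */
size_t count_bad_pixels(const unsigned char *ref, const unsigned char *img,
                        int w, int h, int tol)
{
    size_t bad = 0;
    size_t n = (size_t)w * (size_t)h;
    for (size_t i = 0; i < n; ++i) {
        for (int c = 0; c < 4; ++c) {
            if (abs((int)ref[4 * i + c] - (int)img[4 * i + c]) > tol) {
                ++bad;
                break;   /* one bad channel is enough to flag this pixel */
            }
        }
    }
    return bad;
}

/* An image "passes" if at most 'max_bad' pixels are out of tolerance. */
int image_passes(const unsigned char *ref, const unsigned char *img,
                 int w, int h, int tol, size_t max_bad)
{
    return count_bad_pixels(ref, img, w, h, tol) <= max_bad;
}
```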

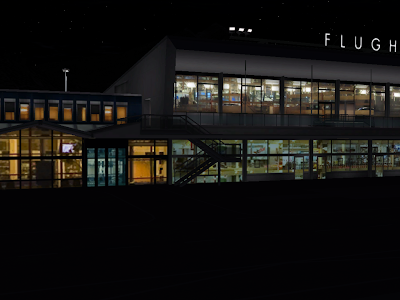

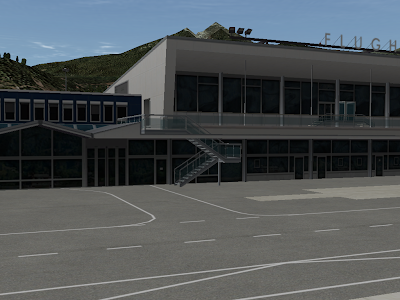

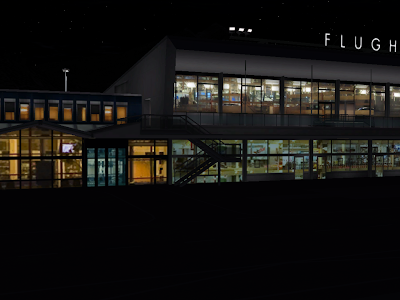

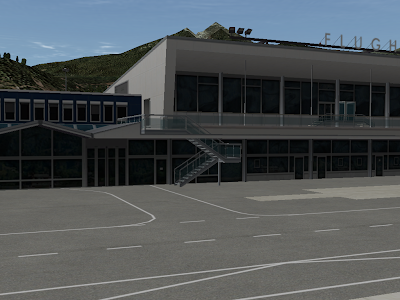

My hope is that this will benefit users (who will see fewer bugs) and the driver writers (who can get feedback on code changes in a uniform and reproducible manner). Here are the eight images in the sample conformance test I wrote, based on the LOWI custom airport scenery.

I’ve been on the road a lot for work, so my apologies to everyone whose email I am sitting on. Most of my time these days is being spent on new next-gen tech. But there are a few things I’m hoping to get done in the short term:

- Cut a new time-demo test. This might seem like a low priority item, but it’s not. Apple, ATI and NVidia all run continuous automatic tests of their video drivers, with many applications and games. They have rooms full of computers that continuously run through 3 minute sections of Quake and Call of Duty, etc. If they introduce a driver bug while doing new development, these machines catch the problem immediately.

The new time demo (based on 945) will have a number of features to make X-Plane a more useful test case. If we can make X-Plane into a test case, then they can catch bugs early, and that means you don’t have to see them.

- Bring WED 1.1 to beta. The only thing holding it back is the DSF exporter, and I did have about two hours to poke at it last week. I’m hoping if I can find just a few more hours, I can finish off the exporter.

- Examine 950 bugs. I have half a dozen bug reports against 950 beta 1. 950 will be a small beta but also a slow one, because Austin and I have a lot of other things on our plates. If you haven’t heard back from me on a bug report, probably it’s still on my to-do list.

We’ll see how much of that I can get to in the next week.

The file loading code in 950 beta 1 for Windows is slower than 945. Sometimes. This will be “fixed” in beta 2. Here’s what happened:

The scenery system uses a number of small files. .ter files, multiple images, .objs, etc. This didn’t seem like a problem at first, and having everything in separate text files makes it easier to take apart a scenery pack and see what’s going on.

The problem is that as computers get bigger and faster, rather than growing bigger files, scenery packs are growing more files. The maximum texture size has doubled from 1024×1024 to 2048×2048. But with paged orthophotos, multicore, and a lot of VRAM, you could easily build a scenery pack with 10,000 images per DSF.

That’s exactly what people are doing, and the problem is that loading all of those tiny files is slow. Your hard drive is the ultimate example of “cheaper by the dozen” – it can load a single huge file at a high sustained data rate. But the combination of opening and closing files and jumping between them is horribly inefficient. 10,000 tiny .ter files is a hard drive’s worst nightmare.

In 950 beta 1 I tried to rewrite part of the low level file code to be quicker on Windows. It appeared to run 20% faster on my test of the LOWI demo area, so I left it in beta 1, only to find out later that it was about 100% slower on huge orthophoto scenery packs. I will be removing these “optimizations” in beta 2 to get back to the same speed we had before. (None of this affects Mac/Linux – the change was only for Windows.)

The long term solution (which we may have some day) is to have some kind of “packing” format to bundle up a number of small files so that X-Plane can read them more efficiently. An uncompressed zip file (that is, a zip where the actual contents aren’t compressed, just strung together) is one possible candidate – it would be easy for authors to work with and get the job done.
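To illustrate why a packed bundle helps, here is a sketch of a hypothetical pack: one big blob read in a single sequential operation, plus a small table of contents giving each member's name, offset, and length. Finding a "file" is then a table search and a pointer into memory, instead of a separate open/seek/close per tiny file. The format is invented for illustration; an uncompressed zip keeps comparable information in its central directory, but parsing real zip headers is out of scope here.

```c
#include <stddef.h>
#include <string.h>

/* One entry in a hypothetical pack's table of contents. */
typedef struct {
    const char *name;
    size_t offset;   /* byte offset of the member inside the blob */
    size_t length;   /* member size in bytes */
} PackEntry;

typedef struct {
    const unsigned char *blob;   /* the whole pack, read in one go */
    size_t blob_size;
    const PackEntry *toc;
    size_t toc_count;
} Pack;

/* Find a member by name. On success returns a pointer into the blob and
   sets *len; returns NULL if the name is missing or the entry is out of range. */
const unsigned char *pack_find(const Pack *pk, const char *name, size_t *len)
{
    for (size_t i = 0; i < pk->toc_count; ++i) {
        const PackEntry *e = &pk->toc[i];
        if (strcmp(e->name, name) == 0) {
            if (e->offset > pk->blob_size || e->length > pk->blob_size - e->offset)
                return NULL;   /* corrupt table of contents */
            *len = e->length;
            return pk->blob + e->offset;
        }
    }
    return NULL;
}
```

With 10,000 members you would want the table of contents sorted (for binary search) or hashed, but the disk-side win comes from the single large read either way.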

In the short term, for 950 beta 2, I am experimenting with code that loads only a fraction of the paged orthophoto textures ahead of time – this means that some (hopefully far away part) of the scenery will be “gray” until loaded, but the load time could be cut in half.
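One plausible way to choose which fraction to load up front is to sort the tiles by distance from the aircraft and load only the nearest ones, leaving the rest to be paged in later (hence the temporarily gray, hopefully far-away scenery). This C sketch is an assumption about how such a policy could look, not the actual 950 code; the Tile struct and the fraction parameter are hypothetical.

```c
#include <stdlib.h>

/* Hypothetical orthophoto tile record. */
typedef struct {
    double x, y;    /* tile center, same units as the aircraft position */
    double dist2;   /* squared distance to the aircraft (scratch space) */
    int loaded;
} Tile;

static int by_distance(const void *a, const void *b)
{
    double da = ((const Tile *)a)->dist2, db = ((const Tile *)b)->dist2;
    return (da > db) - (da < db);
}

/* Sort tiles nearest-first and mark the closest 'fraction' (0..1) of them
   as loaded. Returns how many tiles were marked. */
size_t preload_nearest(Tile *tiles, size_t n, double ax, double ay, double fraction)
{
    for (size_t i = 0; i < n; ++i) {
        double dx = tiles[i].x - ax, dy = tiles[i].y - ay;
        tiles[i].dist2 = dx * dx + dy * dy;
        tiles[i].loaded = 0;
    }
    qsort(tiles, n, sizeof *tiles, by_distance);
    if (fraction < 0.0) fraction = 0.0;
    if (fraction > 1.0) fraction = 1.0;
    size_t k = (size_t)(fraction * (double)n + 0.5);   /* round to nearest */
    if (k > n) k = n;
    for (size_t i = 0; i < k; ++i)
        tiles[i].loaded = 1;   /* stand-in for actually reading the texture */
    return k;
}
```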

There is one thing you can do if you are making an orthophoto scenery pack: use the biggest textures you can. Not only is it good from a rendering perspective (fewer, larger textures means less CPU work telling the video card “it’s time to change textures”) but it’s good for loading too – fewer larger textures means fewer, larger total files, which is good for your hard disk.

(Thanks to Cam and Eric for doing heavy performance testing on some of the 950 beta builds!)

This blog post is for amateur plugin developers. By amateur I mean: some plugin developers are professional programmers by day, and are already familiar with all aspects of the software development process. For those developers, the SDK is unsurprising and performance is simply a matter of applying standard practice: locate the worst performance problem, fix it, wash-rinse-repeat.

But we also have a dedicated set of amateur plugin developers – whether they had programming experience before as hobbyists, or learned C to take their add-ons to the next level, this group is very dedicated, but doesn’t have the years of professional experience to draw on.

If you’re in that second group, this post is for you. Explaining how to performance tune code is well beyond the scope of a blog post, but I do want to address some fundamental ideas.

I receive a number of questions about plugin performance (to which the answer is always “that’s not going to cause a performance problem”). It is understandable that programmers would be concerned about performance; X-Plane is a high performance environment, and a plugin that wrecks that will be rejected by users. But how do you go from worrying about performance to fixing it?

Measure, Measure, Measure, Measure.

If I had to go crazy and recite a sweaty and embarrassing mantra about performance tuning so that I could be humiliated on YouTube it would go: measure, measure, measure, measure.

If you want your plugin to be fast, the single most important thing to know is: you have to find performance problems by measurement, not by speculation, guessing or logic.

If you are unfamiliar with a problem domain (which means you are writing new code or a new algorithm – that is, doing something interesting), there is no way you are going to make a good guess as to where a performance problem is.

If you have a ton of experience in a domain, you still shouldn’t be guessing! After 5 years of working on X-Plane, I can make some good guesses as to where performance problems should be. But I only use those guesses to search in the likely places first! Even with good guesses, I rely on measurement and observation to make sure my guess wasn’t stupid. And even after 5 years of working on the rendering engine, my guesses are wrong more often than they are right. That’s just how performance tuning is: it’s really hard for us to guess where a performance problem might be.*

Fortunately, the most important thing to do, measuring real performance problems, is also the easiest, and requires no special tools. The number one way to check performance: remove the code in question! Simply remove your plugin and compare frame-rate. If removing the plugin does not improve fps, your plugin is not hurting fps.

It is very, very important to make frame-rate comparison measurements under equal conditions. If you measure with your plugin in the ocean and without your plugin at LOWI, the results are meaningless. Here’s a trick I use in X-Plane all the time: I set my new code to run only if the mouse is on the right half of the screen. That way I can be sitting at a fixed location, with the camera not moving, and by mousing around, I can very rapidly compare “with code”, “without code”. The camera doesn’t move, the flight model is doing the same thing – I have isolated just the routine in question. You can do the same thing in your plugin.
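The mouse trick can be sketched as a tiny helper. In a real plugin you would get the cursor position and screen width from the SDK (for example XPLMGetMouseLocation and XPLMGetScreenSize) inside your callback, and a frame time from something like the elapsed-time argument your flight loop callback receives; here they are plain parameters. The ABStats accumulator is a hypothetical addition so the comparison produces averages rather than just a feel.

```c
/* A/B switch: returns 1 (run the new code) while the mouse is on the
   right half of the screen, 0 otherwise. */
int new_code_enabled(int mouse_x, int screen_width)
{
    return mouse_x >= screen_width / 2;
}

/* Totals for each side: index 0 = "without", index 1 = "with". */
typedef struct {
    double total_ms[2];
    long frames[2];
} ABStats;

/* 'enabled' must be 0 or 1, e.g. the value from new_code_enabled. */
void ab_record(ABStats *s, int enabled, double frame_ms)
{
    s->total_ms[enabled] += frame_ms;
    s->frames[enabled] += 1;
}

/* Average frame time for one side, or 0 if nothing was recorded yet. */
double ab_average_ms(const ABStats *s, int enabled)
{
    return s->frames[enabled] ? s->total_ms[enabled] / s->frames[enabled] : 0.0;
}
```

Because the camera and flight model are frozen, any difference between the two averages is attributable to the code behind the switch.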

Understand Setup Vs. Execution

This is just a rule of thumb, and you cannot use this rule instead of measuring. But generally: libraries are organized so that “execution” code (doing stuff) is fast, while setup and cleanup code may not be. The SDK is definitely in this category. To give a few examples:

- Drawing with a texture in OpenGL is very fast. Loading up a texture is not fast.

- Reading a dataref is fast. Finding a dataref is not as fast.

- Opening a file is usually slower than reading a file.

- You can run a flight loop per frame without performance problems. But you should only register it once.

If you want to pick a general design pattern, separate setup from execution, and performance-tune them separately. You want things that happen all the time to be very fast, and you can be quite intolerant of performance problems in execution code. But if you have setup code in your execution code (e.g. you load your textures from disk during a draw callback) you are fighting the grain; the library you are using probably hasn’t tuned those setup calls to be as fast as the execution code.
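Here is that pattern in miniature. The registry below is a hypothetical stand-in for something like the SDK's dataref system, where XPLMFindDataRef is the setup call and XPLMGetDataf is the execution call. Finding by name costs a string search, so it happens once at startup; each frame only uses the cached handle. The names such as "sim/example/altitude" are made up for this sketch and are not real datarefs.

```c
#include <string.h>

/* Hypothetical named-value registry standing in for a real library. */
typedef struct { const char *name; float value; } Entry;

static Entry registry[] = {
    { "sim/example/airspeed", 120.0f },
    { "sim/example/altitude", 3000.0f },
    { "sim/example/heading",  270.0f },
};

typedef Entry *Handle;

/* Setup: linear search with string compares. Call once, not per frame. */
Handle find_value(const char *name)
{
    for (size_t i = 0; i < sizeof registry / sizeof registry[0]; ++i)
        if (strcmp(registry[i].name, name) == 0)
            return &registry[i];
    return NULL;
}

/* Execution: a single pointer dereference, cheap enough for every frame. */
float read_value(Handle h)
{
    return h ? h->value : 0.0f;
}

static Handle g_altitude;   /* cached during setup */

void plugin_start(void) { g_altitude = find_value("sim/example/altitude"); }

/* Per-frame work touches only the cached handle. */
float per_frame(void) { return read_value(g_altitude); }
```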

Math And Logic Is Fast

Modern computers are astoundingly fast. If you are worried that doing a slightly more complex calculation will hurt frame-rate, don’t be. One of the most common questions about performance I get is: will my systems code slow down X-Plane. It probably won’t – the things you calculate in systems logic are trivial in computer-terms. (But – always measure, don’t just read my blog post!)

In order to have slow code you basically need one of two things:

- A loop. Once you start doing some math multiple times, it can add up. Adding numbers is fast. Adding numbers 4,000,000,000 times is not fast. It only takes one for-loop to make fast code slow.

- A sub-routine. The subroutine could be doing anything, including a loop. Once you start calling other people’s code, your code might get slow.
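You can watch the loop effect for yourself with nothing more than the standard C clock() function: time the same trivial addition at increasing iteration counts and see the cost scale with the count. This is a generic sketch; the absolute numbers depend on your machine and compiler, which is one more reason to measure on your own setup.

```c
#include <stdio.h>
#include <time.h>

/* Add 1.0 to a running total 'n' times and report the CPU time used.
   'volatile' stops the compiler from replacing the loop with a constant. */
double sum_n(long n, double *elapsed_ms)
{
    volatile double total = 0.0;
    clock_t start = clock();
    for (long i = 0; i < n; ++i)
        total += 1.0;
    clock_t end = clock();
    if (elapsed_ms)
        *elapsed_ms = 1000.0 * (double)(end - start) / CLOCKS_PER_SEC;
    return total;
}

/* Print timings for 1e3, 1e5 and 1e7 additions. */
void show_loop_cost(void)
{
    for (long n = 1000; n <= 100000000L; n *= 100) {
        double ms = 0.0;
        double s = sum_n(n, &ms);
        printf("%10ld additions: %8.3f ms (sum %.0f)\n", n, ms, s);
    }
}
```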

This is where the professionals have a certain edge: they know how much a set of standard computer operations “cost” in terms of performance. What really happens when you allocate a block of memory? Open a file? If you understand everything going on to make those things happen, you can have a good idea of how expensive they are.

Fortunately, you don’t need to know. You need to measure!

SDK Callbacks Are Fast (Enough)

The SDK’s XPLM library serves as a mediator between plugins and X-Plane. Fortunately, the mediation infrastructure is reasonably fast. Mediation includes things like requesting a dataref from another plugin, or firing off a draw callback. This “callback” overhead contains no loops internally, and thus it is fast enough that you won’t have performance problems doing it correctly. One draw callback that runs every frame? Not a performance problem. Read a dataref? Not a performance problem. (Read a dataref 4,000,000 times inside a for-loop…well, that can be slow, as can anything!)

However you should be aware that some plugin routines “do work”. For example, XPLMDrawObject doesn’t just do mediation (into X-Plane), it actually draws the object. Calls that do “real work” do have the potential to be slower.

Beware of one exception: a dataref read looks to you like a request for data. But really it happens in two parts. First the SDK makes a call into the other plugin that provides the data (often but not always X-Plane itself) and then that other plugin comes up with the data. So when I say “dataref reads are fast” what I really mean is: the part of a dataref read that the SDK takes care of is fast. If the dataref read goes into a badly written plugin, the read could be very, very slow. All of the datarefs inside X-Plane vary from fast to very fast, but if you are reading data from another plugin, all bets are off.

Of course, all bets are off anyway. Did I mention you have to measure?

* Why can’t we guess? The answer is: abstraction. Basically well structured code uses libraries, functions, etc. to hide implementation and make the computer seem easier to work with. But because many challenging problems are hidden from view (which is a good thing) it’s hard to know how much real work is being done inside the black box. Build a black box out of black boxes, then do it again a few times, and the information about how fast a function is has been obscured several times over!

There’s a slight performance win to be had by grouping taxiways by their surface type.

Now clearly if you have to have an “interlocked” pattern of asphalt on top of concrete, on top of asphalt, this isn’t an option.

But where you do have the flexibility to reorder, if you can group your work by surface type, X-Plane can sometimes cut down on the number of texture changes, which is good for framerate.

X-Plane will try to do this optimization for you, but X-Plane’s determination of “independent” taxiways (taxiways whose draw order can be swapped without a visual artifact) is a bit limited and can only catch simple cases.
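A toy model of the win: count how many times the surface texture changes as taxiways are drawn in order, then reorder them so equal surfaces sit next to each other. The sketch assumes every taxiway is independent (any order looks the same), which is exactly the assumption X-Plane cannot make in general; the stable sort keeps the authored order within each surface type. The Taxiway struct and Surface enum are hypothetical.

```c
#include <stddef.h>

enum Surface { ASPHALT, CONCRETE, GRASS };

typedef struct {
    int id;                  /* authored draw order */
    enum Surface surface;
} Taxiway;

/* Number of texture binds needed to draw 'n' taxiways in this order
   (the first bind counts as a change too). */
int texture_changes(const Taxiway *t, size_t n)
{
    int changes = 0;
    for (size_t i = 0; i < n; ++i)
        if (i == 0 || t[i].surface != t[i - 1].surface)
            ++changes;
    return changes;
}

/* Stable insertion sort by surface. Only valid if every taxiway is
   independent, i.e. reordering cannot change what ends up on top. */
void group_by_surface(Taxiway *t, size_t n)
{
    for (size_t i = 1; i < n; ++i) {
        Taxiway key = t[i];
        size_t j = i;
        while (j > 0 && t[j - 1].surface > key.surface) {
            t[j] = t[j - 1];
            --j;
        }
        t[j] = key;
    }
}
```

For an alternating asphalt/concrete layout of five pieces, this cuts five texture changes down to two.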

For what it’s worth, interlocked patterns of surfaces were much more a problem with old X-Plane 6/7 type airport layouts, where the taxiways were sorted by size, and there could be hundreds of small pieces of pavement.

I have blogged in the past regarding the rendering settings in X-Plane, but this seems to come up periodically, so here we go again. Invariably someone asks the question: “what computer do I have to buy to run X-Plane with all of the sliders set to maximum?”

I now have an answer, in the form of a question: “How hungry do you have to be to clean your plate at an all-you-can-eat buffet?”

There is no amount of hungry that will ever be enough to eat all of the food at an all you can eat buffet – you can always ask for more. And when it comes to rendering settings and global scenery, X-Plane is (whenever possible) the same way. You can always set more traffic, more birds, more objects, more FSAA.

Now the all-you-can-eat buffet doesn’t have infinite amounts of food in the building – just enough that they know that they won’t run out. And X-Plane is the same way. There is a maximum if you set everything all the way up, but we try to make sure that no one is going to hit a point where they want more eye candy but they’ve maxed out the settings. Eat all you want, we’ve got more.

Why on earth would we set up X-Plane like this? The answer is choice.

If you go to an all you can eat buffet, you can fill up on nothing but potatoes, or you can have five pieces of chicken. It’s up to you. X-Plane is the same way – you decide if you want objects to be visible farther away or more densely. Would you rather have roads or trees? Birds or high frame-rate? You decide!

Not everyone’s appetite is the same, and not everyone’s taste is the same. This is very true when it comes to flight simulation. There are huge variations in hardware capability, target framerate (some users don’t mind 20 fps, some demand 80 fps) and in what part of the visual experience people care about most (objects vs. FSAA vs. visibility distance, etc).

Given such a heterogeneous environment, the only way to meet the needs of a wide group of users is to present choice, and make sure that we have enough of everything.

So when you go to set the rendering settings, don’t think that setting objects to anything less than maximum is like only eating half the steak you bought at a steak-house. Rather, the rendering settings are like picking which food from the buffet makes it to your plate. You choose how much you want based on what you can consume, and you pick and choose what is most desirable to you. And like an all you can eat buffet, don’t eat too much – the results won’t be pleasant!