X-Plane SDK Roadmap | User Interface and Avionics

Time for an upgrade. Ben Supnik discloses the future of X-Plane Panel Rendering and UI SDKs, including some long-awaited requests!


In this blog post, Ben reflects on the SDK enforcement in 12.4.0 and 12.4.1, and an update going forward…

Last week Marco and I were on a live stream and had a chance to talk about some of the upcoming developments we’re working on; next month Marco will be full time with us, but for now here are a few notes on things making progress in the lab. I’m sure publicly discussing them on Friday the 13th will have no unexpected consequences. (Knocks on wooden desk repeatedly.)

We should hopefully have a release candidate for 12.1.2 next week – this week the team was fixing crash bugs, and we’re down to finishing up stability work. Crash rates in the beta look good enough to ship. (Please consider turning on analytics – we don’t collect your personal data but we do record crashed vs non-crashed sim runs – this is how we know whether stability is getting better or worse!)

12.2.0

Dark Cockpits: Art team is evaluating Maya’s exposure fusion work. Generally things look better than the shipping product, but we discovered yesterday that the indirect lighting environment is pretty weird on cloudy days. We’ve been fixing bugs, removing old hacks we no longer need, and I’m optimistic that the update should be both better looking and closer to reality.

We also have some fixes to the PBR material model that may get X-Plane closer to Blender and Substance Painter 2. This is a double-edged sword: we don’t want to change the material model such that everyone has to repaint everything (that would be too much both for our team and for add-on makers) but we do want X-Plane to be as close to WYSIWYG as possible to make development faster. Art is evaluating these changes too.

We’re targeting 12.2.0 (a “major free update”) for these changes so we can run a substantial alpha and beta program and give add-on makers time to report bugs.

12.1.X

ATC, Weather: we have two minor releases before 12.2.0 making their way through testing. The first is ATC with SIDs and STARs – as of now it looks like that will ship next, but all releases are subject to change based on bugs.*

The other update is a weather update, and it looks like it may have some substantial features:

As of now the weather branch also has network sync of trucks and jetways to external visuals. This only affects users using external visuals but for those users, it’s a big feature, and it’s also an important foundational technology for us.

* In the past we have announced “X” is next and if that code was buggy, we’d just delay shipping anything until we fixed it. We’re trying to be more flexible and ship what’s ready first, so good code doesn’t have to wait for unrelated bugs.

X-Plane 12.1.1 is out! This is a quick “bug fix” patch on X-Plane 12.1.0. If you could not load the sim due to problems with XPLM_64.dll, please update using the installer to fix it. Full notes here.

Besides fixing bugs, X-Plane 12.1.1 enables X-Plane’s web API*. Basically we were asked for this so many times at FSExpo that Chris and Daniela put the remaining work on the fast track.

Our plan is to build several services into the web server; the web APIs will not be exact mirrors of the plugin SDK, but will cover enough functionality to build a wide range of apps. We have plans for:

Documentation on the new web APIs can be found here.

X-Plane alum and fellow X-Plane-10-survivor Tom Kyler is back working on developer documentation for us, and has finished a first release on some of the new X-Plane 12.1.0 features:

Tom has big plans for X-Plane’s documentation; one thing that’s great to see in these docs is really good illustrations based on real sample 3-d models and test projects.

A detail texture is a repeating, high-detail pattern that adds high-res detail to an otherwise lower-resolution texture. Detail textures let us add fine detail at high resolution without using a ton of extra VRAM. We use detail textures with bits of “gravel” to make the runways look more realistic.

We introduced detail textures over a decade ago in X-Plane 10 to enhance ground detail around airports and in the new (at the time) autogen cities.

What we did not do was give them a good name. At the time we called them “decal” textures. This has turned out to be incredibly confusing, because in every other game engine ever, a high-res texture applied over the entire material (which is what we have) is called a detail, and a decal basically means “sticker that you stick on somewhere”. Detail textures make the ground look more rocky, decals add blood spatter and bullet holes to the walls in your zombie-shooter game.

So moving forward, we are going to try to call detail textures “detail textures” wherever possible, to be consistent with the norms of content creation. Tom and Maya will be updating labels in Blender and the docs to be consistent about this.

For X-Plane 12.1.1, Maya has extended the detailing system in two ways:

You can get the 4.3.3 beta XPlane2Blender plugin here to use the new detail textures. Maya is planning on a release Real Soon Now™.

* Please note that while the dataref API does use websockets, most of our web APIs are conventional REST requests over HTTP. I did consider “The X-Plane developers never REST..until now” as a section title.

The X-Plane 12.1 release candidate is out today!* The last twenty four hours have been a mad dash to get the release candidate posted before we head to Las Vegas for FlightSimExpo 2024. If you are attending, stop by the X-Plane booth – there will be a bunch of us there including myself, Austin, and Marco, our new release manager.

All of this is a little bit exciting because we can’t recut the release candidate while in Las Vegas – one of the machines involved still requires a human to sit in front of it. We’ve been racing to get the RC done while we still have access to those machines, and the build system responded as you’d expect – by failing in all sorts of new ways. My plan is to just not breathe until I’m on the plane tomorrow.

(*) Because this is a release candidate you still have to check “get betas” in the installer to opt in to getting the RC; once the candidate has been out for a week, if nothing huge goes wrong, we will declare it final and it will become the official update for everyone.

If you’re at FSWeekend, say hey to our peeps there! In the meantime, we’re working to kill off the last 12.1.0 features. A few internal pics* from killing off the last features:

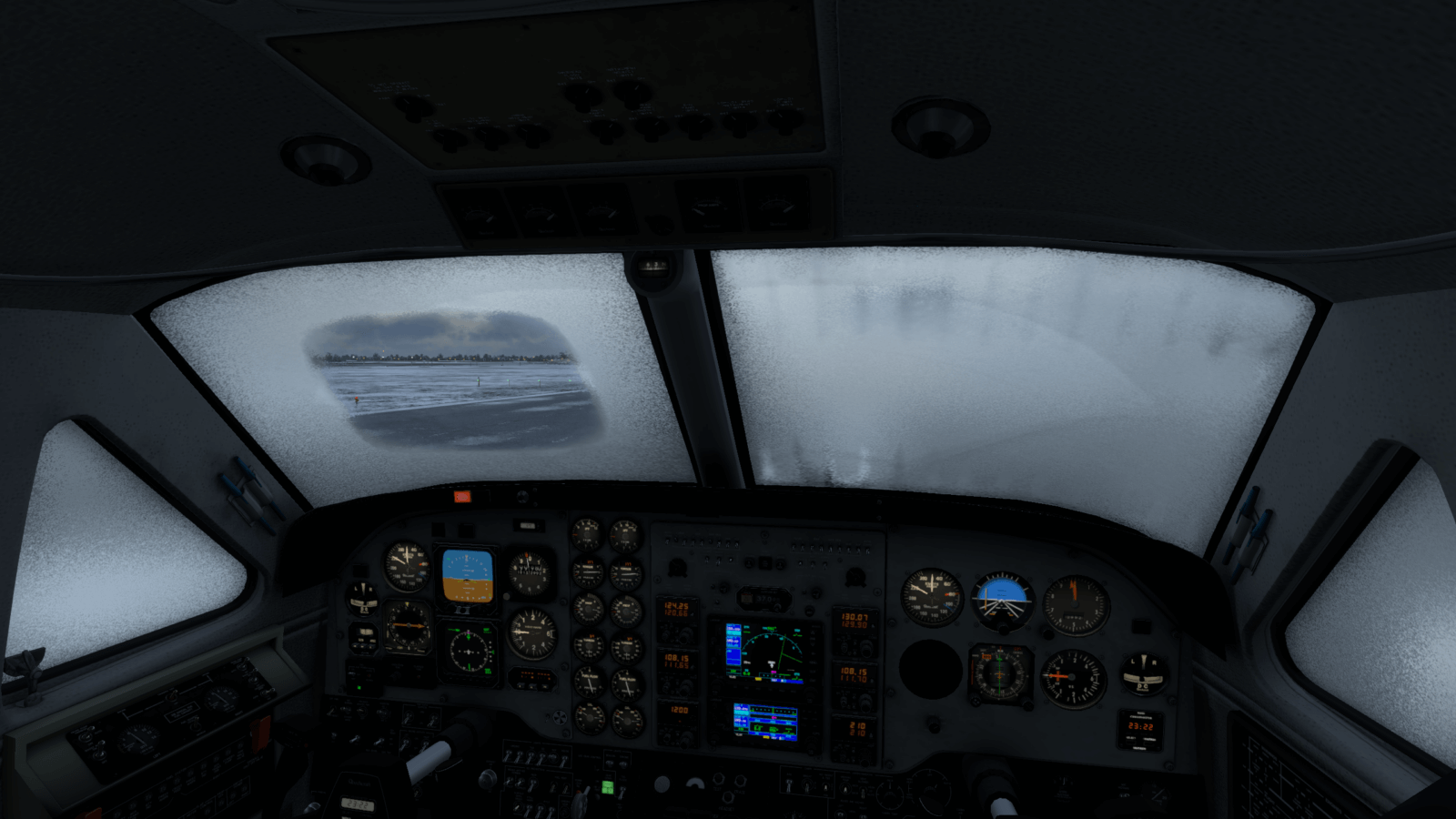

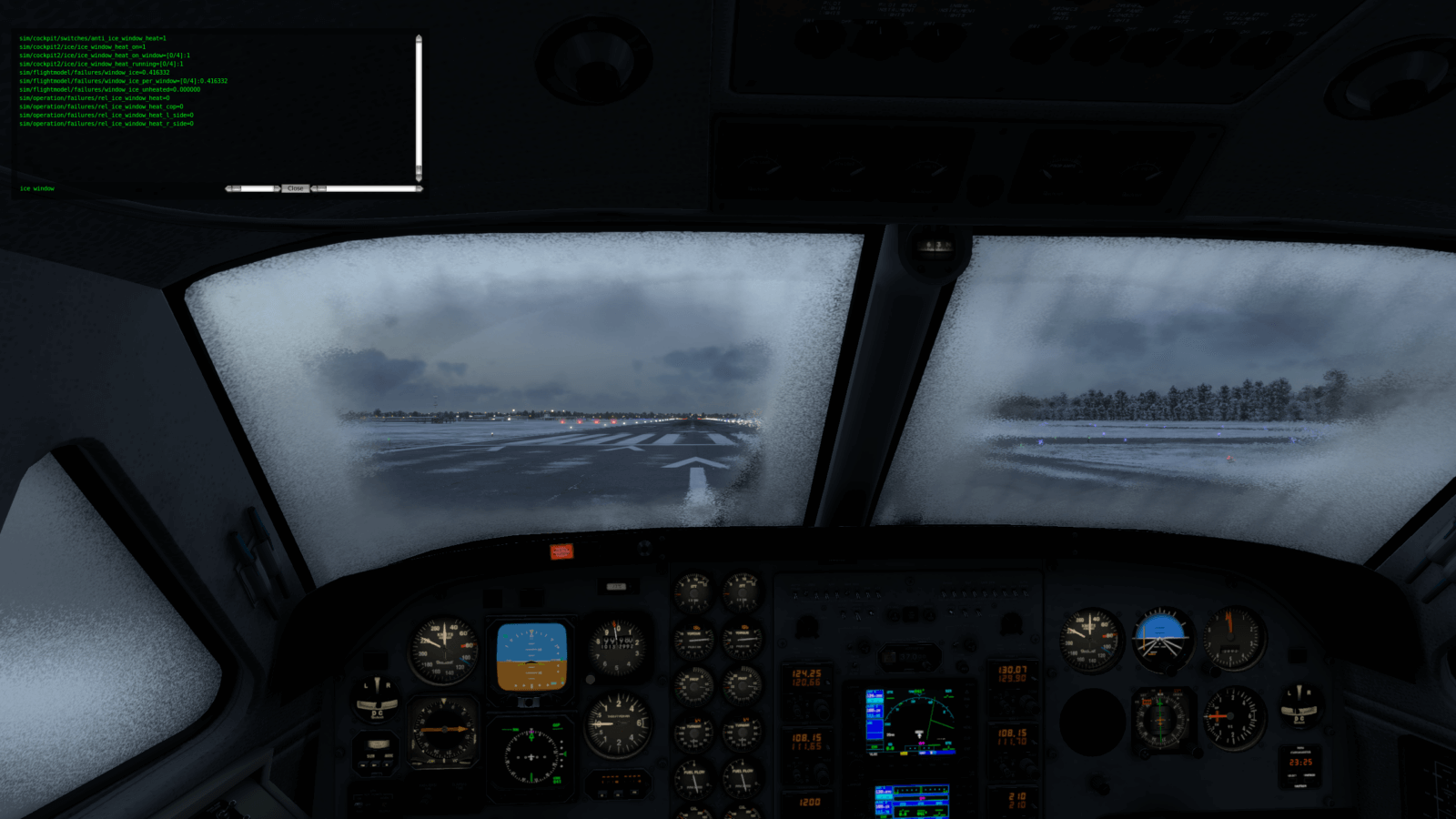

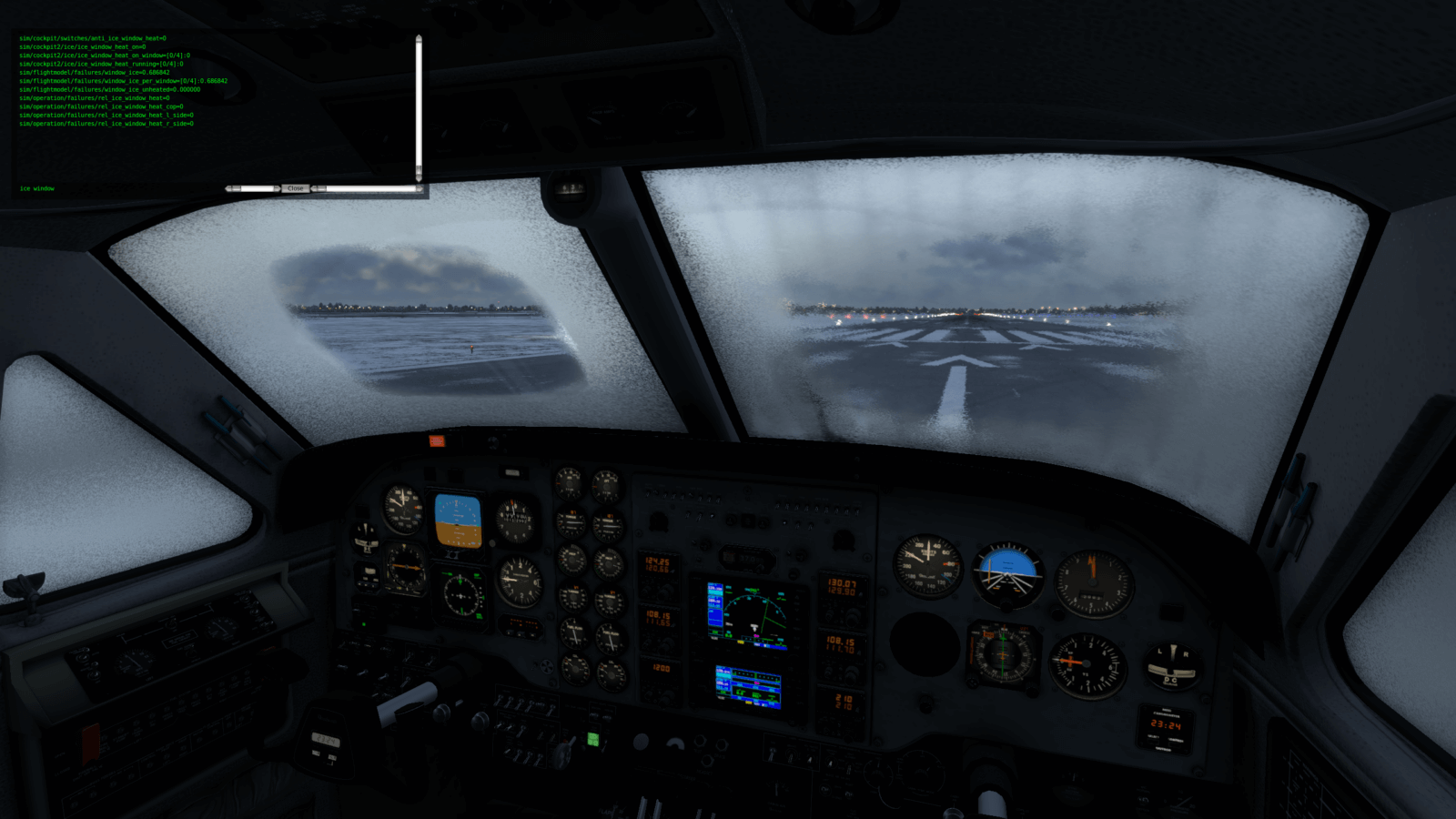

Author-controlled de-icing has had a series of bugs in 12.0.x. For 12.1.0 we’ve gone over it with a fine-tooth comb; Alex ran the above tests with our King Air, which has the unusual case of two overlapping de-icing zones. We’ll have updated docs, Blender exporter support, and builds for authors.

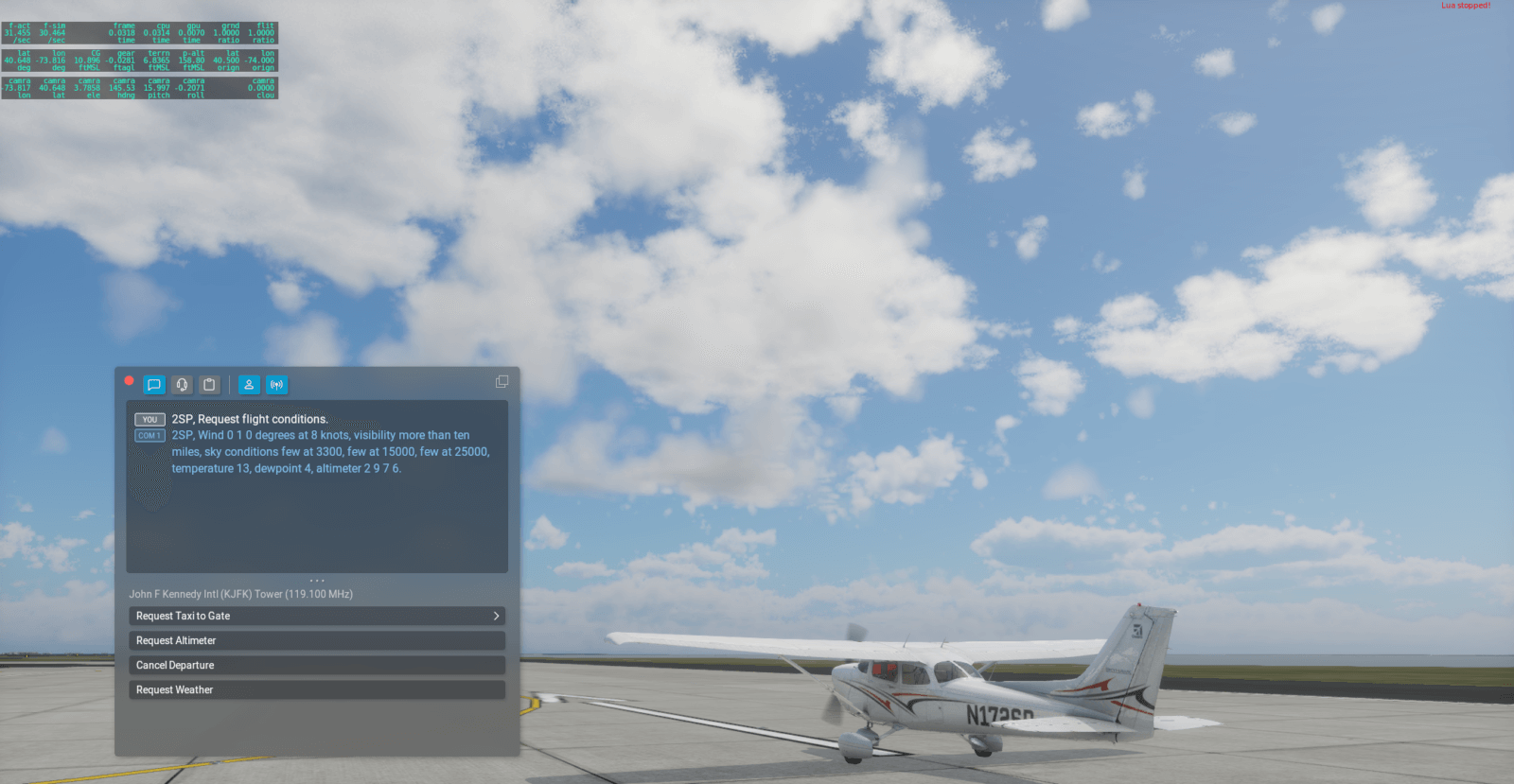

Some tests of real weather. In the first pic, the sky says clear but there’s a distant cloud…because the weather report is local.

Water turbidity is fixed for 12.1.0 and I am finishing documentation for authors. It looks like Oscar’s work on Ortho4XP is on GitHub. Please note that X-Plane 12.1.0 does not improve X-Plane 11 orthophoto water compatibility; v11 packs will only have 2-d water because their meshes are not triangulated properly for 3-d waves.

Based on the progress we made this week, I am hoping we will be able to test these features next week and start a private alpha in our developer lobby.

* Please note: these are internal pics from the developers that I am posting while the marketing team is too far away to object – literally what we were passing around while discussing the features. Don’t panic over FPS; the sim is running a debug build in those real weather pics, for example.

In my previous post I drew an analogy between a scenery system with its file formats and a turtle within its shell. We are limited by DSF, so we are making a new file format for base meshes so we and all add-on developers can expand the scope of our data and make better scenery for X-Plane.

The really big change we are making to base meshes is to go from a vector-centric to a raster-centric format. Let’s break that down and define what that means.

Vectors are fancy computer-graphics talk for lines defined by their mathematical end-points. (Pro tip: if you want to be a graphics expert, you just need the right big words. Try putting the word anisotropic in front of everything, people will think you just came from SIGGRAPH!) DSF started as an entirely-vector format:

This isn’t the only thing DSF does – we added raster capabilities and there is e.g. raster sound and season data in X-Plane 12, but DSF is fundamentally about vector data – saying where the edges of things go exactly.

This was great for a while, but now that we have more and more vector data (complex coastlines, complex road grids, complex building footprints) the DSFs are getting too big and slow for X-Plane.

Raster data is any data stored in a 2-d grid. This includes images (which in turn includes orthophotos) but it also includes 2-d height maps (DEMs), and the 2-d raster data we include in DSF now (e.g. sound raster data, season raster data etc). Any time we store numbers that mean something in a 2-d array, we have raster data.
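To make "numbers that mean something in a 2-d array" concrete, here is a minimal sketch of looking up a value in a small height raster. The grid layout (row 0 is the southern edge, column 0 is the western edge), the bounds parameters, and the function name `sample_raster` are assumptions made for this illustration; they are not taken from DSF or any X-Plane format.

```python
import math

def sample_raster(grid, west, south, east, north, lon, lat):
    """Bilinearly interpolate a value from a 2-D grid of numbers.

    Assumed layout (illustration only): grid[0] is the southernmost row,
    grid[r][0] is the westernmost column, and the grid spans exactly
    [west, east] x [south, north].
    """
    rows, cols = len(grid), len(grid[0])

    # Convert the geographic position to fractional grid coordinates.
    fx = (lon - west) / (east - west) * (cols - 1)
    fy = (lat - south) / (north - south) * (rows - 1)

    # Clamp so positions on or beyond the edge still return a value.
    fx = min(max(fx, 0.0), cols - 1)
    fy = min(max(fy, 0.0), rows - 1)

    # The four surrounding samples.
    x0, y0 = int(math.floor(fx)), int(math.floor(fy))
    x1, y1 = min(x0 + 1, cols - 1), min(y0 + 1, rows - 1)
    tx, ty = fx - x0, fy - y0

    # Blend west-to-east along both rows, then south-to-north.
    south_row = grid[y0][x0] * (1 - tx) + grid[y0][x1] * tx
    north_row = grid[y1][x0] * (1 - tx) + grid[y1][x1] * tx
    return south_row * (1 - ty) + north_row * ty


# A 2x2 height map (meters) covering one degree of longitude and latitude.
heights = [[0.0, 10.0],
           [20.0, 30.0]]
center = sample_raster(heights, 0.0, 0.0, 1.0, 1.0, 0.5, 0.5)
```

The same lookup works whether the numbers are elevations, season codes, or sound zones; only the interpretation of the stored value changes.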

Raster data has several advantages over vectors:

Twenty years ago, when I first worked on DSF, computers didn’t have the capacity to use lots of raster data – this was back when 8 MB of VRAM was “a lot”. But now we no longer need to depend on vectors for space savings.

Raster tiles are raster data broken into smaller tiles that get pieced together. Raster tiles have become the standard way to view GIS data – if you’ve used Apple Maps or Google Earth or OpenStreetMap or any of the map layers in WED, you’ve used raster tiles.
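As a rough illustration of how tiles get "pieced together", the sketch below uses the tile numbering convention that OpenStreetMap-style web maps use: at zoom level z the world is cut into 2^z by 2^z tiles, and a longitude/latitude maps to one tile index. This is the common web-map scheme, shown only as an example; it is an assumption for illustration and not a description of the tiling X-Plane's new format will use.

```python
import math

def lonlat_to_tile(lon_deg, lat_deg, zoom):
    """Return the (x, y) tile index containing a point, web-map style.

    x counts tiles eastward from longitude -180; y counts tiles southward
    from the top of the Web Mercator projection.
    """
    n = 2 ** zoom  # tiles per axis at this zoom level
    lat_rad = math.radians(lat_deg)
    x = int((lon_deg + 180.0) / 360.0 * n)
    y = int((1.0 - math.asinh(math.tan(lat_rad)) / math.pi) / 2.0 * n)
    # Clamp so points on the far edge (e.g. longitude +180) stay in range.
    return min(max(x, 0), n - 1), min(max(y, 0), n - 1)


def children(zoom, x, y):
    """Each tile splits into four tiles at the next zoom level."""
    return [(zoom + 1, 2 * x + dx, 2 * y + dy) for dy in (0, 1) for dx in (0, 1)]
```

Because every tile has exactly four children, a viewer can load coarse tiles far away and finer tiles nearby without the tiles overlapping or leaving gaps.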

Raster tiles have a bunch of advantages too:

So our plan for the next-generation base mesh is “all raster tiles, all the time” – we’d like to have elevation data, land/water data, vegetation location data, as well as material colors all in raster tile form. This would get us much better LOD/streaming characteristics but also provide a very simple way for custom scenery packs to override specific parts of the mesh at variable resolution with full control.
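One way to picture the override idea is as a priority-ordered lookup: ask each scenery pack in turn whether it has a tile covering the requested area, and within a pack fall back to coarser parent tiles when the exact resolution is missing. The sketch below is purely hypothetical; the pack structure, the `(zoom, x, y)` keys, and the rule that a higher-priority pack wins at any resolution are all assumptions for illustration, not the actual design.

```python
def resolve_tile(packs, zoom, x, y):
    """Find the tile that should supply data for the requested location.

    packs: list of (pack_name, tiles) in priority order, highest first,
           where tiles maps (zoom, x, y) -> tile data. (Hypothetical layout.)
    Returns (pack_name, (zoom, x, y), data), or None if nothing covers it.
    """
    for name, tiles in packs:
        z, tx, ty = zoom, x, y
        # Walk up the quadtree: each parent tile covers its four children.
        while z >= 0:
            if (z, tx, ty) in tiles:
                return name, (z, tx, ty), tiles[(z, tx, ty)]
            z, tx, ty = z - 1, tx // 2, ty // 2
    return None


# A custom pack overrides one small area; the global pack covers everything.
packs = [
    ("custom_airport", {(2, 1, 1): "hand-made elevation"}),
    ("global", {(0, 0, 0): "default elevation"}),
]
inside = resolve_tile(packs, 3, 2, 3)    # falls within custom tile (2, 1, 1)
outside = resolve_tile(packs, 3, 7, 7)   # only the global tile covers this
```

In this sketch a custom pack only needs to ship tiles for the area it changes, at whatever resolution it chooses; everything else falls through to the lower-priority packs.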

Raster tiles are not the same thing as orthophotos. A raster tile is any data contained in a 2-d array, not just image data cut into squares (e.g. orthophotos). So while a raster-tile system may make it easier to build orthophoto scenery, it does not mean that the scenery can only be orthophotos.

Thomson and Dellanie posted a preview of what’s coming in X-Plane 12.1 – click over to the news blog to see the pretty pictures. Short story long, it’s a graphics-intensive release, but it’s also a big release, with weather, systems, avionics and ATC updates too. What follows here is a few details for developers.

As with all past X-Plane 12 patches, we are planning to do a private “alpha build” test run with the developer lobby before public beta. We do this both to find out about add-on compatibility and to get the worst bugs fixed with a smaller test group. As of this writing, ATC is at the end of bug fixes, the last graphics changes are being tested, but my weather work is still mid-development.

This release turned out to have a lot for scenery developers:

The entire DECAL system (existing and new normal map decals) is also usable in OBJs for aircraft, and the particle system has received some upgrades that can be used everywhere.

One warning: the old “smoke puff” directive for OBJs from X-Plane 8 is now inoperative; with 12.1.0 we finally removed the old particle system completely. I suggest using the new particle system as it will give you real control over what kind of smoke comes out of your models.

Two new plugin APIs are planned for 12.1.0:

We are still putting finishing touches on the avionics APIs now, but this tech is very close to complete, and definitely usable.

Unfortunately we will not have a low level weather API in 12.1.0 – R&D on this is ongoing, but at least in better shape than it was before due to fixes to the internal weather code.

At the X-Plane Developer Conference in Montreal this year I gave a presentation sharing my thinking on our next-gen scenery system. This has created a lot of interest but also a lot of confusion. So in these next two blog posts I want to start by clarifying two fundamental ideas about scenery.

Here’s the key point for the first one:

A new scenery format is not the same as new scenery.

This can be confusing because we haven’t changed either our scenery file formats or our scenery in quite a while, and often the two change together. Let’s break this down.

A scenery file format is the way we represent scenery in our simulator. It consists of several things:

My first work for Laminar Research (two decades ago) was building all of those things: I invented DSF files, wrote the DSF reader inside X-Plane (DSFLib), worked on the rendering engine, and created the tools to write the files (DSFTool).

When we talk about just the scenery, this is the final rendered files that people fly with. Remember when we shipped 60GB worth of content in 12.0.9? That was new scenery rendered out with all the latest and greatest data.

X-Plane ships with the “global scenery” – a set of about 18,000 DSFs that ensure land everywhere from 60S to 75N. But this is not the only scenery out there – there’s TrueEarth, HD base meshes, SimHeaven, and scenery made with Ortho4XP.

Lots of people can make scenery, often in many different ways (using land class, orthophotos, autogen, etc.) but all of that scenery must be in X-Plane’s scenery file format, e.g. DSF.

The scenery is the turtle and the scenery file format is the shell. The scenery can only be as complex as there is capacity in that file format (shell).

So the first part of my talk was a tour of how we have outgrown DSF, and pointed out that there are some things that DSF can’t do. For example, several add-on makers want to stream custom scenery, but DSF makes that basically impossible. DSF also isn’t meant for really high detail vector data, so we’ve been having trouble using all of the latest OSM imports.

The second part of the talk discussed our plans for a replacement to the base mesh file format, which is based on raster tiles. This part of the talk said nothing about what kind of scenery we (Laminar Research) would make, which raised a lot of questions.

But now that you understand the turtle and the shell, you have a lens to understand what we’re saying. This wasn’t an announcement of next-gen scenery, only an announcement of a bigger shell that will make that next-gen scenery possible.

So the next-gen scenery format is all about potential. The scenery file format limits what is possible for all scenery (both what is built into the sim and add-ons), so we want to raise those limits quite a bit in the next-gen base-mesh format.

The way we are doing that is by moving the base mesh from a vector-centric approach to a raster-centric approach; I’ll break that down in another post.

After the X-Plane Developer Conference in Montreal this weekend (thanks to everyone who came and especially ToLiss for hosting/making us feel totally at home in Montreal) I thought “I should probably post something to the dev blog talking about what we’re up to.” I logged in and saw…I haven’t posted anything in four months.

So that’s not great. The truth is the X-Plane team is larger than it was in the v10 days and I spend most of my communications time talking to the internal team and third party developers.

I’m going to try to post once a week here. This sentence may be an embarrassing monument to lofty but impractical goals in another four months, but putting it in writing is a way to commit to it, and it won’t be the most embarrassing thing I’ve ever done.

We announced the X-Plane Store plan in Montreal; Dellanie’s got a great FAQ there, but here for developers I just want to make two points clear:

We have two “big updates” planned right now:

Both releases will have a bunch of other stuff too; they’re big patches. I’m pointing to the graphics/FM divide because the decision to push one of graphics or physics in each patch is intentional to keep the scope of the beta under control.

We are aiming to get 12.1.0 into private testing this month.

Probably only three people on Earth care about this, but after a decade (more walk of shame) I was trolled into updating the .net file format specification. So if this is of interest to you, I apologize both for the delay and for the pain you are about to suffer. The road art file format in X-Plane is incredibly complicated and I don’t actually recommend anyone try to hack it, but it’s also not meant to be a secret.

I am working to get water and orthophotos sorted out, hopefully for 12.1.0. We will also have a DSF recut (hopefully for 12.1.0 but maybe for 12.2.0) to address gateway airport boundaries and runway undulations.

In Montreal I discussed a little bit about the future of the X-Plane scenery system, but that’s complex enough to warrant another blog post.