[This post is a “behind the scenes” look at the tech that makes up the X-Plane massive multiplayer (MMO) server. It’s only going to be of interest to programming nerds—there are no takeaways here for plugin devs or sim pilots.]

[Update: If you’re interested in hearing more, I was on the ThinkingElixir podcast talking about this stuff.]

In mid-2020, we launched massive multiplayer on X-Plane Mobile. This broke a lot of new ground for us as an organization. We’ve had peer-to-peer multiplayer in the sim for a long time, but never server-hosted multiplayer. That meant there were a lot of technical decisions to make, with no constraints imposed by existing code.

Requirements

We had a few goals from the start:

- The server had to be rock solid. We didn’t want a tiny error processing some client update to bring down the whole server for everyone connected.

- We wanted a single shared world[1]. Functionally, this means the language/framework we chose would need to have a really good concurrency story, because it would need to scale to tens of thousands of concurrent pilots.

- We wanted quick iteration times. We couldn’t be sure how well MMO would be received by users, so we wanted the initial investment in it to be just enough to validate the idea.

- It needed to be fast. Multiplayer has a “soft real time” constraint, so we needed to be able to service all clients consistently and on time. (Quantitatively, this means our 99th percentile response times matter a lot more than the mean or median.)
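To make the tail-latency point concrete, here is a small Python sketch using made-up numbers (not X-Plane measurements): when 2% of updates are slow, the median barely notices and the mean moves only a little, but the 99th percentile is dominated by the slow updates. The `percentile` helper is a hypothetical nearest-rank implementation written for this example.

```python
import statistics

def percentile(samples, p):
    """Nearest-rank percentile: smallest value with at least p% of samples at or below it."""
    ordered = sorted(samples)
    # Nearest-rank method: rank = ceil(p/100 * n), 1-based.
    rank = max(1, -(-p * len(ordered) // 100))
    return ordered[rank - 1]

# Hypothetical response times in ms: 98 fast updates and 2 slow ones.
latencies_ms = [5] * 98 + [250] * 2

mean = statistics.mean(latencies_ms)
median = statistics.median(latencies_ms)
p99 = percentile(latencies_ms, 99)
print(f"mean={mean:.1f} ms, median={median} ms, p99={p99} ms")
```

Here the mean comes out at 9.9 ms and the median at 5 ms, both of which look healthy, while the p99 is 250 ms: exactly the pilots who would notice stuttering traffic.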

Choosing a Language

From those requirements, we could draw a few immediate conclusions:

- The requirements for stability and fast iteration time ruled out C++ (or, God help us, C). Despite having a lot of institutional knowledge about those languages, they’re slower to develop in than modern “web” languages, and a single null pointer will bring down the entire system. (Ask me how I know. 😉 )

- The speed & scalability requirements ruled out a lot of modern web languages like Ruby, where the model for scaling up is generally “just throw more servers at it.” We didn’t want to (forever!) pay the development cost of synchronizing multiple machines across a data center—that’s a drag on both dev time and client latency.

This eventually led me to a few top contenders:

- Rust

- Go

- Elixir

Each of these languages has a solid concurrency story. Rust would probably be the fastest & most scalable, at the cost of developer productivity. But Elixir had one major thing that neither Rust nor Go could touch: fault tolerance built into the very core of the platform.

Elixir has this concept of running code in lightweight, separate “processes.” These are emphatically not OS processes; under the hood, they’re just a data structure in the Erlang/Elixir VM (called the BEAM). One of the core ideas of Elixir processes is that they’re expendable: a crash in one process doesn’t affect other processes, unless those processes explicitly depend on the crashing one. So, consider a process tree structured like this (apologies for my ASCII art):

```
              UDP Server                 ______________________
            /   |   ...   \             | Spatial Data Store 1 |
           /    |          \             ----------------------
          /     |           \            ______________________
         /      |            \          | Spatial Data Store 2 |
  Client 1   Client 2  ...  Client n     ----------------------
                                                  ...
                                         ______________________
                                        | Spatial Data Store n |
                                         ----------------------
```

A crash in the Client 1 process affects only that client’s connection—not Client 2, nor the UDP server itself. Likewise, a crash in Data Store 1 (in our case, we partition the data in memory based on each plane’s spatial location) doesn’t affect the data in any other data store.
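The post doesn’t say how planes are assigned to data stores, so purely as an illustration, here is a Python sketch of one simple scheme: a fixed latitude/longitude grid in which each cell gets its own independent store. The cell size and the names `cell_for` and `SpatialPartition` are hypothetical, and in the real server each store would be its own process rather than a dict.

```python
import math
from collections import defaultdict

CELL_DEG = 10.0  # hypothetical grid cell size, in degrees

def cell_for(lat, lon, cell_deg=CELL_DEG):
    """Map a position to an integer grid cell (row, col); longitude wraps at 180."""
    row = math.floor((lat + 90.0) / cell_deg)
    col = math.floor(((lon + 180.0) % 360.0) / cell_deg)
    return row, col

class SpatialPartition:
    """One independent store per grid cell (a dict standing in for a store process)."""

    def __init__(self):
        self.stores = defaultdict(dict)  # cell -> {plane_id: (lat, lon)}
        self.location = {}               # plane_id -> cell it currently lives in

    def update(self, plane_id, lat, lon):
        new_cell = cell_for(lat, lon)
        old_cell = self.location.get(plane_id)
        if old_cell is not None and old_cell != new_cell:
            # The plane crossed a cell boundary: hand it off to the other store.
            del self.stores[old_cell][plane_id]
        self.stores[new_cell][plane_id] = (lat, lon)
        self.location[plane_id] = new_cell

    def nearby(self, lat, lon):
        """Planes in the query's cell plus its 8 neighbors (columns wrap around)."""
        row, col = cell_for(lat, lon)
        n_cols = int(360 / CELL_DEG)
        found = {}
        for dr in (-1, 0, 1):
            for dc in (-1, 0, 1):
                found.update(self.stores.get((row + dr, (col + dc) % n_cols), {}))
        return found

world = SpatialPartition()
world.update("N123", 47.45, -122.31)  # near Seattle
world.update("N456", 47.60, -122.33)
world.update("G-ABC", 51.47, -0.45)   # near London
print(sorted(world.nearby(47.5, -122.3)))  # ['N123', 'N456']
```

The appeal of partitioning like this is that a neighborhood query only touches a handful of stores, and a crash in one store takes out only that cell’s data.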

(Of course, a crash in the base UDP Server would still destroy all client connections—there’s no getting around that, so we try to minimize the work the UDP server itself does.)

This makes an Elixir server extremely fault tolerant. And that’s paid off for us in practice. In the last 30 days, we’ve had ~2,000 crashes in client connections (usually because of garbled UDP packets)—each of these required the client to reconnect behind the scenes, but it didn’t affect any other clients. To date, we’ve never had a crash high enough in the process tree to disconnect multiple clients or lose data, and I don’t really expect we will.
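Python has nothing like BEAM process isolation, but a rough analogue shows the “let it crash” contract: each client’s packet handling runs behind its own failure boundary, and an exception throws away only that client’s state, which is the equivalent of forcing that one client to reconnect. `ClientSession`, `handle_packet`, and `Server` are hypothetical names for this sketch.

```python
class ClientSession:
    """Per-client state; in the Elixir server this would live inside one process."""

    def __init__(self, client_id):
        self.client_id = client_id
        self.packets_seen = 0

def handle_packet(session, payload):
    """Hypothetical handler that raises on a malformed (here: empty) packet."""
    if not payload:
        raise ValueError("garbled packet")
    session.packets_seen += 1

class Server:
    def __init__(self):
        self.sessions = {}
        self.crashes = 0

    def dispatch(self, client_id, payload):
        if client_id not in self.sessions:
            self.sessions[client_id] = ClientSession(client_id)
        session = self.sessions[client_id]
        try:
            handle_packet(session, payload)
        except Exception:
            # Only this client's state is discarded; it has to "reconnect".
            self.crashes += 1
            self.sessions[client_id] = ClientSession(client_id)

server = Server()
server.dispatch("a", b"\x01")
server.dispatch("b", b"\x01")
server.dispatch("a", b"")      # garbled packet crashes client "a" only
server.dispatch("b", b"\x02")
print(server.sessions["a"].packets_seen, server.sessions["b"].packets_seen, server.crashes)  # 0 2 1
```

The important difference is that the BEAM enforces this boundary in the runtime (each process has its own heap and there is no shared mutable state), whereas in this sketch a bug that corrupted shared state would still leak across clients.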

The other thing this process architecture makes possible is fair scheduling of clients against each other: if you have 10,000 clients, and one of them for whatever reason takes 10 seconds to process an update, that client won’t be allowed to bogart a hardware thread. The BEAM preempts a process once it has used up a small, fixed budget of work (counted in “reductions,” roughly function calls), so other processes get scheduled in between. That makes it a lot easier for us to keep response times consistent even in the face of unexpected issues in the wild.
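The BEAM’s preemption counts work rather than wall-clock time. Here is a toy Python model of that idea, in which generators stand in for processes and each `yield` counts as one unit of work. The budget value is arbitrary and not the BEAM’s actual setting. A round-robin scheduler that lets each task run only `budget` steps per turn keeps one slow task from starving everyone else.

```python
from collections import deque

def job(name, steps, log):
    """A toy 'process' that does `steps` units of work, yielding after each one."""
    for _ in range(steps):
        yield  # one unit of work (a "reduction" in this toy model)
    log.append(name)  # record the order in which jobs finish

def run(tasks, budget):
    """Round-robin scheduler: each task runs at most `budget` steps per turn."""
    queue = deque(tasks)
    while queue:
        task = queue.popleft()
        try:
            for _ in range(budget):
                next(task)
        except StopIteration:
            continue  # task finished; drop it from the run queue
        queue.append(task)  # budget used up: back of the line

finished = []
run([job("slow", 10_000, finished), job("fast-1", 5, finished), job("fast-2", 5, finished)], budget=100)
print(finished)  # ['fast-1', 'fast-2', 'slow']
```

With an effectively unlimited budget (cooperative, run-to-completion scheduling), the slow job would finish first and the fast ones would wait behind it, which is the kind of latency spike the BEAM’s preemption avoids.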

The result of all this is that we can support thousands of clients on a single off-the-shelf cloud VM instance, with great reliability. Developer productivity has never been better, either—I went into this knowing zero Elixir, and by the time I had worked through the official Getting Started tutorial, I felt confident enough in the language to dive in.

Surprises with Elixir: The Bad

This wouldn’t be an honest post-mortem if I didn’t talk about the ways in which Elixir didn’t live up to its hype.

First, despite all the tools the Elixir ecosystem has to support multi-node distributed systems (i.e., a cluster of servers), this is never going to be easy if you need synchronization between them. I’m not aware of any platform that does it better, but this is something the Elixir community kind of oversells. Everybody wants to talk about multi-node clusters, but the reality is that (at least at our scale), we didn’t actually need multi-node support, and it would have been utterly foolish to pay the very high dev costs to build it in from the start. If we ever need to support orders of magnitude more concurrent pilots, we’ll do so by moving to a bare-metal, 64-core machine or something… not by spinning up dozens of 4-core VMs.

The same goes for zero downtime deployments. Again, the community loves to talk about this, and it is indeed really cool that the BEAM supports it. (I don’t know of any other platform where this is possible!) But just like multi-node clusters, there’s a very high dev cost to making this work, and you probably don’t need it. In our case, we’re just doing blue/green deploys: we migrate client traffic from the old server to the new one when you start your next flight.
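The “migrate on your next flight” rule amounts to session pinning, which can be sketched in a few lines of Python. New flights go to whichever server is currently live, flights already in progress stay on the server they started on, and the old server can be retired once nothing is pinned to it. The `Router` class and the server names are hypothetical, not X-Plane’s actual deploy tooling.

```python
class Router:
    """Hypothetical blue/green router that pins each flight to its starting server."""

    def __init__(self, live):
        self.live = live   # server that receives newly started flights
        self.pinned = {}   # flight_id -> server the flight started on

    def start_flight(self, flight_id):
        self.pinned[flight_id] = self.live
        return self.live

    def route(self, flight_id):
        return self.pinned[flight_id]

    def end_flight(self, flight_id):
        self.pinned.pop(flight_id, None)

    def deploy(self, new_server):
        """Send new flights to `new_server`; flights in progress are untouched."""
        self.live = new_server

    def drained(self, server):
        """True once no in-progress flight is still using `server`."""
        return server not in self.pinned.values()

router = Router(live="blue")
router.start_flight(1)
router.deploy("green")
router.start_flight(2)
print(router.route(1), router.route(2), router.drained("blue"))  # blue green False
router.end_flight(1)
print(router.drained("blue"))  # True
```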

The biggest shortcoming we encountered in practice was with Elixir’s package ecosystem. To be fair to Elixir, it’s actually way broader than reading comments on the internet had led me to believe, but it still doesn’t hold a candle to NPM or pip (both for better and for worse). This meant I had to implement the UDP protocol we use for game state sync (RakNet) from scratch[2]. That was time consuming, but not too terrible. (Of course, I come from the C++ world, where “implement it from scratch” is the default!)
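For a taste of what “from scratch” involves, here is a Python sketch that decodes only the first few bytes of a RakNet datagram. The flag bits and the 24-bit little-endian sequence number follow open-source RakNet ports such as RakLib; treat them as assumptions to verify against the RakNet source rather than as a spec. The sketch ignores ACK/NAK payloads and the encapsulated packets that follow the header. Rejecting malformed input with an exception is also how a garbled packet ends up crashing a client process.

```python
from dataclasses import dataclass
from typing import Optional

# Flag bits as used by open-source RakNet ports (assumption; verify against RakNet).
FLAG_VALID = 0x80
FLAG_ACK = 0x40
FLAG_NAK = 0x20

@dataclass
class DatagramHeader:
    is_ack: bool
    is_nak: bool
    sequence: Optional[int]  # only present on data datagrams

def parse_header(data: bytes) -> DatagramHeader:
    """Decode the leading flags byte and, for data datagrams, the sequence number."""
    if len(data) < 1 or not data[0] & FLAG_VALID:
        raise ValueError("not a valid RakNet datagram")
    flags = data[0]
    is_ack = bool(flags & FLAG_ACK)
    is_nak = bool(flags & FLAG_NAK)
    if is_ack or is_nak:
        return DatagramHeader(is_ack, is_nak, None)
    if len(data) < 4:
        raise ValueError("truncated datagram header")
    # 24-bit little-endian sequence number in bytes 1..3.
    sequence = int.from_bytes(data[1:4], "little")
    return DatagramHeader(False, False, sequence)

print(parse_header(bytes([0x84, 0x01, 0x02, 0x00])).sequence)  # 513
```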

The last pain point I had was with IDE integration. As somebody who uses JetBrains exclusively for all my development work (C++, Objective-C, Android, Python, PHP, Node.js, etc.), it pains me that there’s not a first-party Elixir IDE. The community intellij-elixir plugin is really good for a community plugin, but in no way will it make you think it’s natively supported. Booting up the debugger can take literally minutes on our project—the debugger is effectively useless, and I use test harnesses or logger debugging almost exclusively.

Surprises with Elixir: The Good

There were a few really amazing things I encountered in working with Elixir that I didn’t really expect from just reading about it on the internet.

- Elixir’s support for integrating with Python, C, and other languages that can talk to C wound up being really valuable. This is great for leveraging libraries written in other languages (though it’s not really suitable for use in our real-time updates due to the inherent cost of marshalling data between the two languages). This let me use a METAR parser written in Python from within Elixir, without having to do painful things like call a system process, ask the Python script to write to disk, then read from disk.

- Pattern matching (and more broadly, the general principle in functional languages of working on the “shape” of the data rather than explicit strong types) is intoxicating. It just leads to such simple, straightforward code! This is one of those things that once you experience it, you start conceiving of all programming problems in these terms, and it’s hard to go back to a language without it.

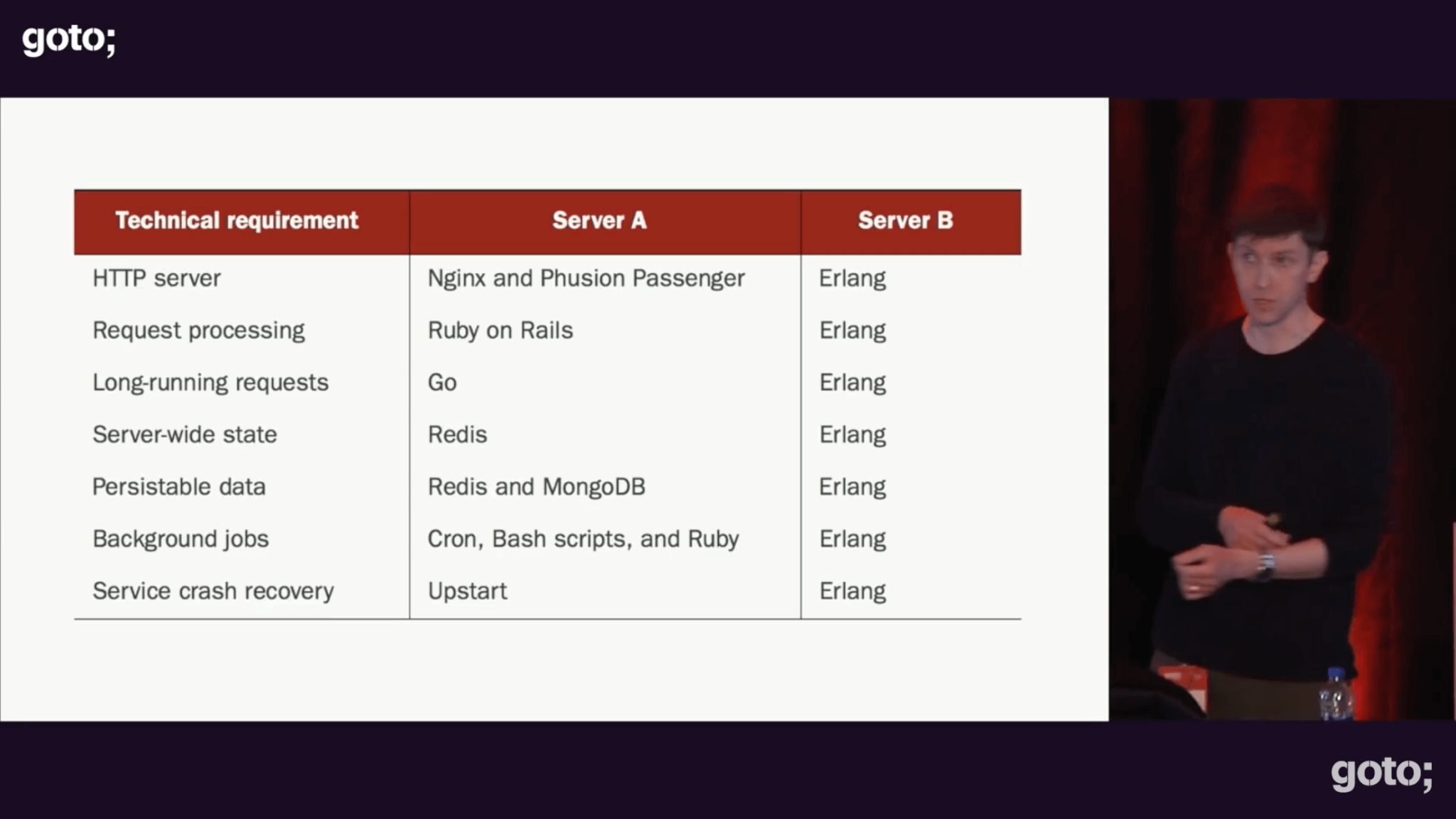

- It’s so nice to have an all-Elixir stack. I’ve written web apps in other languages (PHP, Node, a bit of Ruby), so I was very used to depending on external technologies for core functionality—HTTP servers, caching layers, Cron jobs, etc. This slide from Saša Jurić’s outstanding talk The Soul of Erlang and Elixir really sums up how Elixir can serve as a web service unto itself:

Now, to be clear, is Elixir’s version of these tools as fully featured as the alternative, standalone version? Probably not. But for X-Plane’s use case, we’ve not found any shortcomings, and without being an expert in Redis/Cron/PM2/whatever, I couldn’t actually tell you what Elixir’s version of this stuff is lacking. And that’s the point, really—instead of needing expertise in a bunch of different tools, you can learn one (i.e., Elixir) really well.


Want to Get Started with Elixir?

If all this is intriguing enough to make you want to dive into Elixir, I can recommend a few resources:

- The best place to start is the Saša Jurić talk linked above. This gives an overview of the philosophy of Elixir (and Erlang, which it’s built on). It’s a great introduction to the high-level concepts you’ll build everything else on top of.

- Next, go through the official Getting Started tutorial. I’ve never seen a language’s first-party documentation as good as Elixir’s. You could honestly read this alone and have enough knowledge to write production services.

- Saša Jurić’s book Elixir in Action. I’ve read most of this for a deeper dive into the language, and while it’s not necessarily required reading beyond the official tutorial, I did find it valuable.

Thoughts, questions, comments? You can drop them in the comments below, or hit me up on Twitter.

[1] Long term, we might actually want to split the world’s traffic into multiple servers (e.g., one for Europe, one for the Americas, etc.), since no amount of technical tricks can eliminate the latency of sending a packet from, say, Sydney to New York. For the initial release, though, we could deal with the latency, and we wanted the option of hosting tens of thousands of players on a single server.
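A back-of-the-envelope calculation shows why. The sketch below assumes that signals in optical fiber travel at roughly two-thirds the speed of light (about 200,000 km/s) along a perfect great-circle path, which real routes never achieve. Under those assumptions, Sydney to New York is roughly 16,000 km, giving a hard floor of roughly 160 ms round trip before any processing at all.

```python
import math

EARTH_RADIUS_KM = 6371.0
FIBER_KM_PER_S = 200_000.0  # assumption: ~2/3 the speed of light in glass

def great_circle_km(lat1, lon1, lat2, lon2):
    """Haversine distance between two points on a spherical Earth."""
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dp = p2 - p1
    dl = math.radians(lon2 - lon1)
    a = math.sin(dp / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dl / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))

distance = great_circle_km(-33.87, 151.21, 40.71, -74.01)  # Sydney -> New York
rtt_ms = 2 * distance / FIBER_KM_PER_S * 1000
print(f"{distance:,.0f} km, minimum RTT ~{rtt_ms:.0f} ms")
```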

[2] We recently open sourced the RakNet protocol implementation—you can find it in the X-Plane GitHub. The README gives a good overview of the full MMO server’s architecture, too: each client connection is a stateful Elixir process, acting asynchronously on a client state struct; clients asynchronously schedule themselves to send updates back to the user.
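That shape (one long-lived, stateful worker per client that schedules its own outgoing updates) can be sketched with Python’s asyncio as a loose stand-in for Elixir processes. A common Elixir idiom for self-scheduling is a process sending itself a message with `Process.send_after/3`. The `ClientConnection` class, its tick rate, and the list standing in for a socket are all hypothetical.

```python
import asyncio

class ClientConnection:
    """Hypothetical per-client worker: owns its state and schedules its own sends."""

    def __init__(self, client_id, tick_s):
        self.client_id = client_id
        self.tick_s = tick_s
        self.state = {"pos": (0.0, 0.0)}
        self.sent = []  # stand-in for writes to the client's UDP socket

    def on_update(self, pos):
        # Incoming data from this client only ever touches this client's state.
        self.state["pos"] = pos

    async def run(self, ticks):
        for _ in range(ticks):
            await asyncio.sleep(self.tick_s)  # schedule the next outgoing update
            self.sent.append(self.state["pos"])

async def main():
    clients = [ClientConnection(i, tick_s=0.01) for i in range(3)]
    clients[1].on_update((47.45, -122.31))
    await asyncio.gather(*(c.run(ticks=2) for c in clients))
    return clients

clients = asyncio.run(main())
print([len(c.sent) for c in clients])  # [2, 2, 2]
```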

Very complete article. I love how you show the good along with the bad and the surprises you guys found. This will do wonders for people that have been curious but never tried the language. Thanks for this.

[noting there’s elixir-mode in emacs…]

Enticing writeup, I think I have a project that might benefit. Thanks!

Even though every language/ecosystem has drawbacks, Elixir included, I can’t help but feel like the “bad” surprises were errors in judgement or a lack of proper research.

Even in the Elixir community most engineers state that you shouldn’t use distributed systems unless you really need them. Why? Because it’s hard. When you actually need it, the way the BEAM works at least makes it much easier to do. If you can afford to have everything go down when that single machine fails, then great; that saves you a lot of problems. So Elixir makes distributed systems easier than most other languages, and you didn’t even need a distributed system, yet because of hyped expectations it could never live up to, it suddenly became a “bad” thing?

Zero downtime deployments are often mentioned, but I’ve only seen them recommended for things like IoT devices that should never go down. The general consensus seems to be: don’t use it unless you know you need it. Still, it’s a positive that it’s even possible.

The package ecosystem is one of the first things to check when using a new language. The number of packages doesn’t mean a thing. Does it contain the packages you need? Are they of the required quality? Is it worth building certain functionality from scratch if packages are not available? Often most of this can be answered by building a small prototype.

I’m all for mentioning drawbacks and trade-offs. It’s the first thing I look for when trying to decide if something is worth using.

I also believe we have to be honest with ourselves. We often “blame” the technology even though we didn’t do the proper research and didn’t validate our own assumptions by properly testing them. In this case the bad surprises seem to be the result of bad preparation or overly optimistic assumptions, not the technology itself.

I stumbled upon the history of Erlang (and hence Elixir) written by its maker. Its first commercial use in the interwebs (non telecom) was an email server named the “mail robustifier”. I thought LR’s Fearless Leader was the only one who came up with names like that!

Inherent headaches aside, an apples-to-apples speed comparison between Elixir and C/C++ would be interesting to see. Not that it would be needed in this case though. Your off the cuff projection of a 64 core server for future growth is surprisingly light. Sounds like a very efficient code architecture.

You might like “Erlang: The Movie” then. It’s a good one.

Re: a comparison between Elixir and C++, I don’t think I’m qualified to do that… first because it would take me forever to reimplement a (less stable) subset of this in C++, and second because I’m 100% sure I would end up with subtle race conditions in it.

For what it’s worth, in my stress-test profiling, something like 95% of the CPU time is spent in the “game logic” portion of the server (despite my having tried to minimize the work the server does per client). The overhead from the networking protocol implementation is negligible, despite that being something I’ve heard people insist “had” to be written in C++ for performance. 🤷‍♂️

Ah, I wasn’t implying I would stop reading the blog if I didn’t see such a comparison : ) it was a pie-in-the-sky curiosity. It appears the language uses a JIT-type compilation concept to get bytecode before executing. It feels like performance might be fairly similar to a C++ version. Maybe not much opportunity cost compared to tearing your hair out to write it from the ground up in ++.

Elixir is much slower than C or Rust, around 3-6 times slower than Go

Generally, after reading how fast it is I was really disappointed by how slow it was.

So if you plan on number crunching, it won’t be the right choice. But for something like a game engine, I guess it is a perfect fit.

Elixir’s strength is in being “really, really” safe and “really, really” parallel. The MMO server, at its core, is a bunch of memcpys and a few queries of spatial data structures. 🙂

Hi, did you try an architecture with containers orchestrated with Kubernetes?

I think it could help a lot.

Really interesting read, thanks a lot.

Excellent article, thank you!

I’m very curious what tools you ended up using for Python interop. Would you expand a bit on this?

We use Export.Python for this. We have a GenServer that initializes itself with the python interpreter, then it can handle calls by calling into Python and returning the results. Looks something like this:
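Purely to illustrate the general shape of that pattern (this is not the X-Plane code, and it uses no Erlang or Export APIs), here is a Python analogue. A long-lived worker initializes an expensive resource once, then serves synchronous calls one at a time from a queue, much as a GenServer handles calls. `load_parser` and its trivial METAR handling are hypothetical placeholders.

```python
import queue
import threading

def load_parser():
    """Hypothetical expensive setup (e.g. starting an interpreter or loading a library)."""
    return lambda metar: {"station": metar.split()[0], "raw": metar}

class Worker:
    def __init__(self):
        self._requests = queue.Queue()
        self._ready = threading.Event()
        threading.Thread(target=self._loop, daemon=True).start()
        self._ready.wait()  # block until one-time initialization has finished

    def _loop(self):
        parser = load_parser()  # done once, like a GenServer's init/1
        self._ready.set()
        while True:
            metar, reply = self._requests.get()
            try:
                reply.put(("ok", parser(metar)))
            except Exception as exc:  # report the failure to the caller, keep serving
                reply.put(("error", exc))

    def call(self, metar, timeout=5.0):
        """Synchronous request/response, loosely like GenServer.call/3."""
        reply = queue.Queue(maxsize=1)
        self._requests.put((metar, reply))
        status, value = reply.get(timeout=timeout)
        if status == "error":
            raise value
        return value

worker = Worker()
print(worker.call("KSEA 121853Z 18008KT 10SM FEW035 12/06 A3001")["station"])  # KSEA
```

Keeping one warm interpreter behind a single mailbox-style queue avoids the spawn-a-process-and-go-through-disk round trip the post describes, at the cost of serializing calls through that one worker.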

Thanks for another great insight blog, always interested in hearing how you guys tackle technology issues.

Out of curiosity, why did you release the RakNet code you worked so hard on?

From my end this is really interesting stuff, but for a company with lots of competition, how does this add up?

In general, we like open sourcing stuff that isn’t core to our business model (our “special sauce,” so to speak). Since RakNet is already open source, any competitors could already use it if they were so inclined. Considering the RakNet implementation was built on top of open source (both by referring to the RakNet source itself, and various implementations in other languages that people had been so kind as to publish), it seemed like the decent thing to do.

(From a purely selfish perspective, it’s good marketing for us, and open source helps attract devs when it comes time to hire.)

And open source encourages open source developers to create open source goodies for your platform, irrespective of whether or not they want to be paid to do so! Laminar Research’s support for Linux is why I create things for X-Plane.

It’s nice to see multiplayer getting some love, I’ve only been advocating it for many years now? 🙂 Now for the $64 question, is this technology going to trickle down to the installed PC base? Or is this a mmo environment only for mobile? And will it scale so that individuals can run their own servers? I understand what you’re trying to do and I commend you guys for doing it. It’s long overdue. But what we’ve been looking for, for so many years now is a system similar to what DCS uses where multiplayer servers can be installed and run via TCP on local machines. So where do we see this going forward as far as X-Plane?

Looking into it. 🙂

You’re such a tease… 🙂

This is a great article. Out of curiosity how many monthly active users does mobile currently have? And when X-Plane desktop gets this functionality, how many users do you think it would grow to?

Sorry, I’m not at liberty to divulge those numbers. Let’s just say it’s more than a hobby project, less than Facebook. 😉