X-Plane has always shipped with the shaders visible to everyone as plain text in the Resources/shader directory. Partly this was due to making it convenient to load the shaders into OpenGL itself, but we also don’t have anything to hide there either so it doesn’t make much sense to try to hide them. You are more than welcome to look and poke at our shaders and if you learn something about X-Plane in the process, that’s awesome!

However, the one big caveat to that is that we never considered the shaders to be part of the publicly accessible interface and they are in no way stable across versions. X-Plane is an actively developed product and we are making a lot of changes to the codebase, including the shaders, so you should never ever distribute a plugin or tool that modifies the shaders. Since we give no guarantee that our shaders will remain stable across versions, you’ll always be left worrying that we might break your add-on.

Additionally, there is a big change to the shader system coming in 11.30 that will definitely break all existing plugins that are modifying shaders. This blog post will cover the upcoming changes and hopefully convince everyone that the shader system is in flux and not to be relied upon as a basis for add-ons. Read More

This beta brings in many new bug fixes and heavily requested new features! As with any beta, be aware that this could break your project SO MAKE BACKUPS! We don’t think there are any drastic changes to the data model, but, better safe than sorry.

Bug Fixes

- #355 – A small UI fix relating to too many manipulator fields being shown

- #360 – A bug fix for Drag Rotate manipulators giving false negatives

- #353, #363, and #260 – All relate to warning people and correct what was allowed with NORMAL_METALNESS and BLEND_GLASS. Previously

Blend Glass was in the same drop down menu as Alpha Blend, Alpha Cutoff, and Alpha Shadow. Now it is a checkbox allowing you to correctly specify a Blend Mode and apply Blend Glass to it. Existing materials with Blend Glass will see this new checkbox automatically checked. Blend Mode will be set to Alpha Blend or, if your plane is old enough to have been worked on during X-Plane 10, it will be set to whatever it was back then.

See the internal text block “Updater Log” for a list of what got updated, including this. You may see, for example:

INFO: Set material "Material_SHADOW_BLEND_GLASS"'s Blend Glass property to true and its Blend Mode to Shadow

- #366 – An Optimization! Useless transitions in the OBJ were being written, now they’re not. Custom Properties still work, there won’t be any visual changes to your OBJ. We haven’t done any profiling but it might have decreased OBJ loading time by a small amount too.

Features

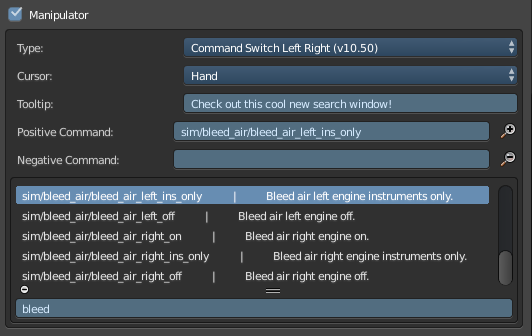

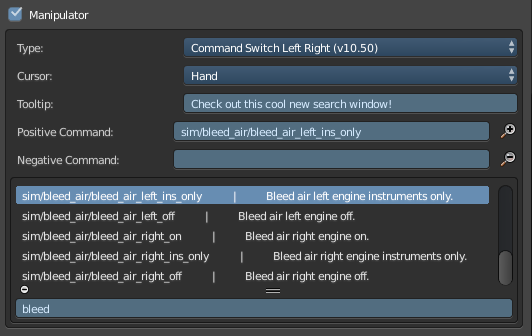

Command Search Window

Thanks to #361, just like the Datarefs.txt Search Window, we now have the same capabilities for searching Commands.txt (for manipulators). We are shipping with X-Plane’s latest Commands.txt file, but of course you can replace it with your own (as long as you keep the name the same). One day we hope to make it much more flexible.

Particle Emitters (not very useful to most yet, I know)

Thanks to #358, some people who have access to X-Plane’s cutting edge particle code can use XPlane2Blender to specify particle emitters. Don’t worry, we’re all working as hard as we can to get these into the hands of others. Fortunately, XPlane2Blender users can hit the ground running the minute it drops!

Build Scripts And Test Runners

- #302 and #307 – Are you a professional XPlane2Blender maintainer and developer (if so we should probably talk!) Then you need a better build script, and a test script to match! Introducing

mkbuild.py, the build script for the modern developer! It creates, it tests, it renames without messy mistake prone human intervention! To top that off, how about a testing script that doesn’t give false positives!

A quick correction – the X-Plane Live session will actually be today, Tuesday at 4 pm (20:00 UTC) – I apologize for the total confusion and chaos on this one. We have your questions from the developer blog and social media, and we’ll try to take live questions as best we can.

EDIT: T-minus 30 minutes to live stream! We’ll be live here on YouTube.

EDIT 2: If you missed the live stream, you can watch the recording: Part 1 and Part 2 (split due to technical difficulties).

I meant to post this weeks ago, and basically just lost track of it, so thanks for the reminders in the comment sections. Here is a PDF export of our slide deck from FSExpo.

Flight Sim Expo 2018 Slide Deck

There is professionally shot video of the event, but it wasn’t shot by us, and I’m afraid I have no idea when it will be posted. We’re looking into using our own cameras next year so that we can ensure a video post on a more timely basis.

X-Plane 11.25 release candidate 1 is live. If you were using an 11.25 beta, you’ll be auto-notified to update. If you’re not using the beta, you can check “get betas” to get the beta. (Steam users: 11.25b1 is available as a Steam public beta – we’ll put the release candidate on Steam early next week if nothing blows up.) Release notes are here.

This update includes the addition of the “High Roller” Ferris wheel/rotating bar to the strip – when we were at FSExpo, the High Roller was very close to the Flamingo, and it was quite clear how visible it was to the skyline.

Austin, Philipp, Alex and I presented a road map for X-Plane at FlightSimExpo this year. The official video of our talk is still not available – it’s apparently in post production. There is an audience video that does have intelligible audio, but this post provides a summary of some of the new things we presented. Read More

X-Plane 11.20 is, of course, final! Like all good Klingon software, it was not so much released – rather it escaped captivity, leaving a trail of blood in the path of all whom opposed it.

I am aware of two plugin-related issues we are tracking:

- Some legacy plugins with widget UIs are missing buttons when the UI is at 150% or 200% scaling. We have a fix for this, but for now you can work around the problem by turning off UI scaling in the graphics settings tab.

- Some Mac plugins compiled against libstdc++ crash with the Steam version (but not the non-Steam version).

Philipp and I are still discussing what to do about this second thing, but if your plugin is in this category (so far we’ve seen it with HeadShake and X-Ivap), my suggestion is: compile and link against clang’s libc++ on OS X – it’s the native Mac C++ runtime and the one that’s going to work well in the long term.

We’ll release an 11.21 release candidate either late this week or early next week, once we collect the bug fixes that got away.

EDIT: HeadShake has been fixed by SimCoders!

X-Plane 11.20r2 has only one bug that we are trying to fix: a bug in the plugin SDK that can cause some plugins to crash when creating and destroying windows.

Tyler and I dug into this and found that the fix was a bit more intrusive than we wanted for this late in an RC.

So: if you are a plugin developer and you are working with the new SDK, either for VR compatibility, to use the new 3.x APIs, or just because you are updating the plugins, and you have time this weekend, please email me and I can send you our new fixed XPLM DLLs.

My hope is to get half a dozen plugin developers to bang on them over the weekend, so that when we cut 11.20r3, it really will be the release.

Edit: RC3 is live – thanks to everyone who helped!

We received a last minute bug report that X-Plane 11.20r2 deletes scenery packs from scenery_pack.ini. This is true! It is also by design, not a bug, the same as X-Plane 10, and not particularly brilliant on my part.

scenery_packs.ini persists the order of your packs (putting newly discovered packs “in front” in alphabetical order upon discovery) and maintains enable-disable status. But it does not retain any other information. The file is totally rewritten on every run and the following otherwise useful stuff tends to get destroyed in the process.

- Comments and notes to yourself

- Whitespace and formatting

- Any scenery packs that can’t be found

Unfortunately this means that if you symlink to an external drive that is unmounted and run X-Plane, your scenery pack order gets lost. This definitely does suck.

In a future version, I can fix this. But we’re not going to mess with it now during RC because it’s totally not changed or broken compared to all past versions of X-Plane since we introduced the scenery_packs.ini file.

For now: you can lock the file as a hack-around to preserve your well-created order while your remote drive is unmounted – when you re-mount and restart, your order isn’t lost. But in this order, new packs aren’t persisted to the file, although they will be used.

If there’s things you want the scenery_packs.ini file to do, commenting on this post would be on-topic. One thing to consider: if we can’t drop missing packs, how do we know a pack was uninstalled? How does the file ever get cleaned?

In the X-Plane 11.20b1, we’re shipping a web browser for the first time. We’re using the Chromium Embedded Framework (CEF)—essentially the same guts as Chrome, wrapped inside X-Plane.

For the time being, it’s being used in one place only*: to support in-app upgrades, so that if you have the demo, you can buy the full version of X-Plane without having to go to the web site. The web view is seamless—you can’t tell by looking at the app that it’s not just part of the native X-Plane user interface.

While its present use is quite limited, if all goes well, we’d like to expand its use elsewhere in the sim—in The Glorious Future™, we could potentially use it to load online charts and the like.

But, there’s a hitch!

There’s not a good way to load two copies of CEF at a time. And, since some plugins (like the ones used in the new Flight Factor A320 Ultimate) depend on a version of CEF provided by a (global) plugin, we have to disable X-Plane’s web browser functionality in the presence of that plugin. This isn’t a big deal right now—after all, if you’ve installed payware, you probably already own the sim anyway—but it will be a shame if, in The Glorious Future™, you have to choose between your payware and core X-Plane functionality.

The situation is even worse than you might expect: CEF absolutely doesn’t support multiple initialization calls in the lifetime of an app—you can’t initialize it, shut it down, then re-initialize it. That means it’s not even possible for plugin authors to be good citizens and “relinquish” CEF to X-Plane, such that you could at least use X-Plane’s browser whenever you’re not using an add-on that depends on it. It’s all or nothing! In fact, you’ll have to uninstall the CEF plugin before X-Plane can use a web view itself.

There are two possible fixes here:

- At some point in the future, we provide access to CEF via the plugin SDK. If we did, it would have to be to the C API only—no fancy C++ wrappers for you. Speaking as someone with the memory of writing for the C API fresh in his mind, let me say: this isn’t a whole lot of fun, and it’s rife with the potential for memory leaks. Moreover, this would break any existing addons that depend on CEF—they would be forced to migrate to the SDK version of the API.

- We could (theoretically) compile an X-Plane-specific version of CEF that would not conflict with the version plugins are using. This would require renaming all the Objective-C symbols on Mac, and renaming the DLL on Windows. I’ve not investigated this for feasibility, but it’s certainly theoretically possible. This would allow X-Plane’s version of CEF to coexist with (one) plugin-provided copy, albeit at double the CPU and memory cost.

Having us expose CEF via the SDK is not an unequivocal win. CEF is notoriously incompatible between versions—you can more or less guarantee that even minor updates will break compatibility of the API somewhere. That means X-Plane would be stuck at a fixed version of CEF for at least the lifetime of a major version to prevent breaking plugins. X-Plane 11.20 uses CEF release 3202; it would be at least the next major version before we updated CEF to a newer version. There are two major downsides to this:

- When X-Plane updates to that newer version, it would break plugins compiled against old versions of the SDK that depend on CEF. That’s a lot more aggressive than our normal deprecation policy, and it makes CEF plugins a potential maintenance headache for plugin devs.

- If your add-on really, really needs features from the latest and greatest CEF release, you’re out of luck—wait a couple years (!!) and maybe we’ll update.

So, here’s what I’d like to hear from plugin devs:

- Are you currently, or are you planning to use CEF in the future?

- Would you be willing to use the C version of the CEF API only?

- Would you be able to accept the limitations and potential headaches (outlined above) of X-Plane-determined CEF versions and compatibility?

If you don’t mind telling me a bit about your intended use cases, that’d be very helpful as well!

EDITED TO ADD: There’s a third option here that I didn’t consider: X-Plane could provide a “wrapper” around CEF that offers “just” a browser surface, and simple interfaces like the ability to change the URL programmatically. This has the advantage that we could update CEF regularly without breaking the API (though there’s always the risk that a new version of Chromium would change how it handles your HTML + CSS + JS). The disadvantages are twofold:

a) If a plugin developer really, really needs the full power of the CEF API, they’re out of luck (or we’re back to square one with respect to having to disable core X-Plane functionality in order to support the plugin).

b) New XPLM APIs are a massive tax on our development time. Every time we need to make a change, we have to go test a dozen plugins… then go through an extensive beta… then inevitably hear about a show-stopping bug we introduced three hours after a release goes final. 😀 In contrast, providing a (major-version-stable), transparent copy of CEF costs us very little dev time; time that would otherwise be spent maintaining the XPLM API can be spent on, like, major features.

* Aside: This is actually how we test a lot of new tech in X-Plane: we find a single, relatively out-of-the-way place to make use of it, ship it in a major version update, and wait to see if it blows up. Betas catch a lot of bugs, but not all, so this is a way of hedging our bets when it comes to code that hasn’t been battle-hardened. This is how we worked the bugs out of the user interface framework (Plane Maker’s panel editor was the testbed), the X-Plane 11 particle system (behind the scenes, it was used for some very minor effects in X-Plane 10.51), and more.